This question was raised on this blog back in January this year in the post Tough Questions About MDM.

Since then, the term blockchain has been used more and more, both in general and in relation to Master Data Management (MDM). As you know, we love fancy new terms in our otherwise boring industry.

However, there are good reasons to consider the blockchain approach when it comes to master data. A blockchain approach can be described as decentralized consensus, which can be seen as the opposite of a centralized registry. Now that the MDM discipline has been around for more than a decade, most practitioners agree that a single source of truth is not practically achievable within an organization above a certain size. Moreover, in the age of business ecosystems, it will be even harder to achieve between trading partners.
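To make the contrast between a central registry and consensus a bit more concrete, here is a minimal sketch in Python. It is only an illustration under loud assumptions: the names `Block`, `Ledger`, `is_intact` and `consensus`, the partner names and the product attribute are all hypothetical, and a simple majority vote stands in for a real blockchain consensus protocol (there is no networking, mining or signing here). Each trading partner appends its own claim about a product attribute to a hash-chained log, and the agreed value is whatever most partners currently assert.

```python
import hashlib
import json
from collections import Counter
from dataclasses import asdict, dataclass, field


@dataclass
class Block:
    """One partner's claim about one attribute of one product (hypothetical model)."""
    index: int
    prev_hash: str
    partner: str
    product_id: str
    attribute: str
    value: str

    def digest(self) -> str:
        # Hash the whole block, including the previous block's hash,
        # so that changing an earlier claim breaks the chain.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


@dataclass
class Ledger:
    """Append-only, hash-chained log shared by all partners (illustrative only)."""
    blocks: list = field(default_factory=list)

    def append(self, partner: str, product_id: str, attribute: str, value: str) -> Block:
        prev_hash = self.blocks[-1].digest() if self.blocks else "0" * 64
        block = Block(len(self.blocks), prev_hash, partner, product_id, attribute, value)
        self.blocks.append(block)
        return block

    def is_intact(self) -> bool:
        # Each block must point at the digest of its predecessor.
        # Note: a change to the very last block is not detected by this check.
        expected = "0" * 64
        for block in self.blocks:
            if block.prev_hash != expected:
                return False
            expected = block.digest()
        return True

    def consensus(self, product_id: str, attribute: str):
        # Take the latest claim per partner, then require a strict majority.
        latest = {}
        for block in self.blocks:
            if block.product_id == product_id and block.attribute == attribute:
                latest[block.partner] = block.value
        if not latest:
            return None
        value, votes = Counter(latest.values()).most_common(1)[0]
        return value if votes * 2 > len(latest) else None


ledger = Ledger()
ledger.append("manufacturer", "SKU-1", "net_weight_kg", "1.2")
ledger.append("distributor", "SKU-1", "net_weight_kg", "1.2")
ledger.append("retailer", "SKU-1", "net_weight_kg", "1.25")
print(ledger.consensus("SKU-1", "net_weight_kg"))
```

The point of the sketch is that no single party owns the "golden record": the agreed value is derived from what the partners have asserted, and the chained hashes make it visible if someone rewrites an earlier claim.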


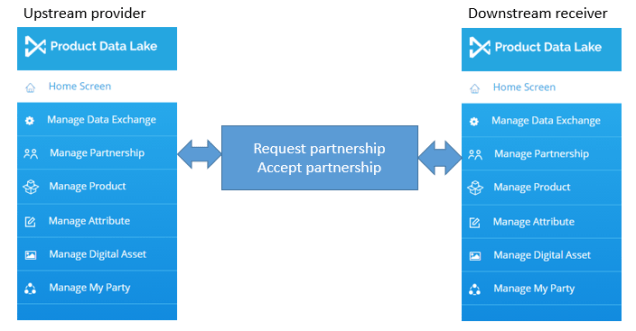

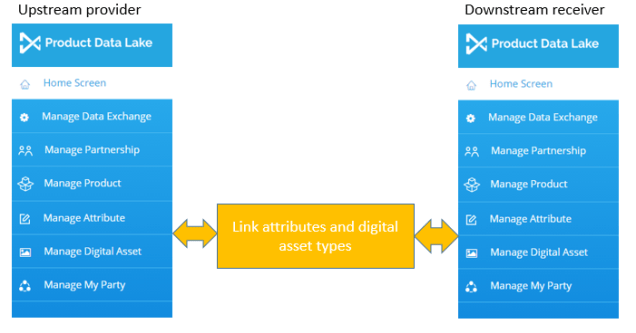

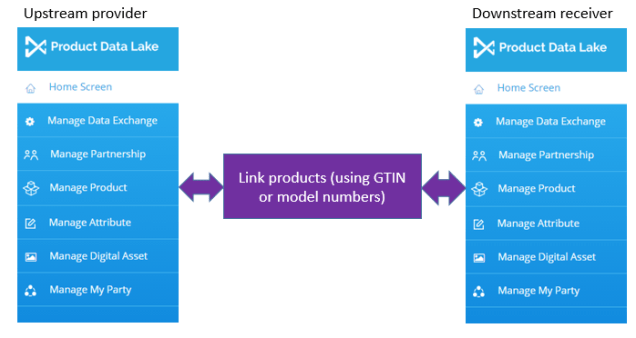

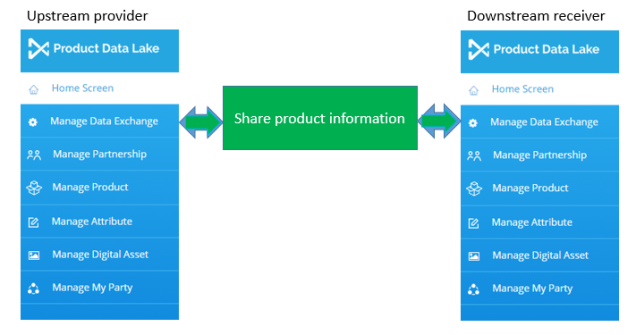

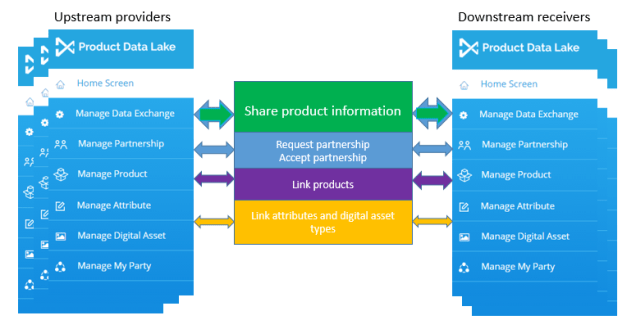

This way of thinking forms the backbone of the MDM venture called Product Data Lake, which I’m working with right now. Yes, we love buzzwords. As if cloud computing, social network thinking, big data architecture and preparing for the Internet of Things weren’t enough, we can add the blockchain approach as a label too.

In Product Data Lake, this approach is used to establish consensus about the information and digital assets related to a given product and, where it makes sense, to each instance of that product (a physical asset or thing). If you are interested in how that develops, why not follow Product Data Lake on LinkedIn?

