What is data quality anyway? This question has been touched on many times on this blog.

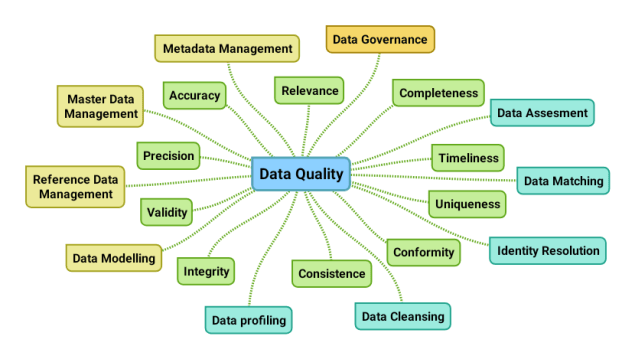

Data quality can be assessed using a range of data quality dimensions – the ones coloured green in the above mind map. These dimensions relate in different ways to various data domains as examined in the post Multi-Domain MDM and Data Quality Dimensions.

Data quality can be managed using a toolbox of sub disciplines – the ones coloured turquoise in the above mind map. The reasons for data cleansing were discussed in the blog post Top 5 Reasons for Downstream Cleansing. Data profiling was visited, along with data matching, in the post Data Quality Tools Revealed. The relationship between data matching and identity resolution was recently described in the post Data Matching and Real-World Alignment.
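To make the idea of data profiling a bit more concrete, here is a minimal sketch in Python. It is not taken from any of the posts mentioned above. The function name `profile_column`, the rule that blank strings count as missing, and the sample e-mail values are all assumptions made purely for illustration.

```python
from collections import Counter


def profile_column(values):
    """Return simple profiling statistics for one column of raw values."""
    total = len(values)
    # Assumption: None and blank strings both count as missing values.
    present = [v for v in values if v is not None and str(v).strip() != ""]
    counts = Counter(present)
    return {
        "rows": total,
        # Share of rows that actually hold a value.
        "completeness": len(present) / total if total else 0.0,
        # Number of different values among the populated rows.
        "distinct": len(counts),
        # Values that occur more than once, i.e. candidates for data matching.
        "duplicated_values": sorted(v for v, n in counts.items() if n > 1),
    }


# Hypothetical customer e-mail column, made up for this example.
emails = ["ann@example.com", "bob@example.com", "", None, "ann@example.com"]
report = profile_column(emails)
print(report)
```

A real profiling tool would go much further (patterns, value ranges, cross-column dependencies), but even simple counts like these give a first hint of where cleansing and matching may be needed.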

The data quality discipline is closely related to other disciplines, the ones coloured yellow, such as data modelling, Reference Data Management (RDM), Master Data Management (MDM) and metadata management. It is also closely related to, if not a sub discipline of, data governance, as also shown in the post A Data Management Mind Map.

While this quote rightly emphasizes that a lot of money is at stake, the quote itself holds a full load of data and information quality issues.

A pearl is a popular gemstone. Natural pearls, meaning pearls that have occurred spontaneously in the wild, are very rare. Instead, most are farmed in fresh water and, under regulations in force in many countries, must therefore be referred to as cultured freshwater pearls.

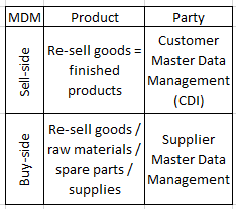

You may argue that PIM (Product Information Management) is not the same as Product MDM. This question was examined in the post

Doing business process improvement most often involves master data as examined in the post