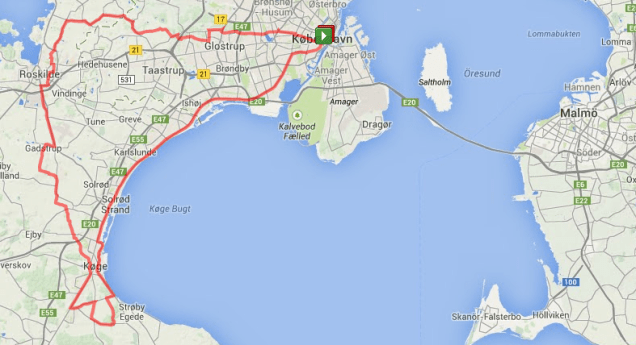

Today we have an Ironman passing through the streets of Copenhagen (and my breakfast). Kudos to the women and men who first swam 3.86 km (2.4 miles), are now cycling 180.25 km (112 miles) and will then run a full marathon of 42.2 km (26.22 miles).

Thinking about it, doing product data management is a bit like an Ironman too. Overall, it is a daunting task, and we have three disciplines to cover:

- Digital Asset Management (DAM) is an activity where many organizations start. It is about handling product images in various sizes and versions along the way and, depending on the product category, installation guides, line drawings, data sheets and other documents. Videos with this and other content are also becoming popular.

- Product Information Management (PIM) is about maintaining hundreds (sometimes thousands) of different attributes describing a product. Some of these attributes are common to most products (like height, weight and colour) and some are very specific to a given product category.

- Master Data Management (MDM) is a marathon in itself. Here you link the above product data with product data in the overall system landscape, including ERP (Enterprise Resource Planning), SCM (Supply Chain Management) and PLM (Product Lifecycle Management). Product data also forms the product domain, which must be aligned with the location domain, asset domain, party domain and perhaps other domains in your MDM world.
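To make the three disciplines a bit more tangible, here is a minimal sketch of how they could fit together as data structures. Everything in it is an assumption made for illustration: the class names (`DigitalAsset`, `ProductRecord`, `MasterProduct`), the field names, the sample product and the identifiers are hypothetical and not taken from any particular DAM, PIM or MDM product.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DigitalAsset:
    """DAM: one file (image, data sheet, video, ...) attached to a product."""
    asset_id: str
    kind: str  # hypothetical values, e.g. "image", "installation_guide", "video"
    uri: str


@dataclass
class ProductRecord:
    """PIM: attributes shared by most products plus category-specific ones."""
    product_id: str
    category: str
    common: dict[str, object] = field(default_factory=dict)
    category_specific: dict[str, object] = field(default_factory=dict)
    assets: list[DigitalAsset] = field(default_factory=list)


@dataclass
class MasterProduct:
    """MDM: links the product record to its identifiers in other systems."""
    record: ProductRecord
    # Maps a system name (e.g. "ERP", "SCM", "PLM") to that system's local id.
    system_ids: dict[str, str] = field(default_factory=dict)

    def id_in(self, system: str) -> Optional[str]:
        """Return the product's id in the given system, or None if not linked."""
        return self.system_ids.get(system)


# Hypothetical sample data, invented for this sketch.
drill = ProductRecord(
    product_id="P-1001",
    category="power_tools",
    common={"height_mm": 210, "weight_kg": 1.6, "colour": "blue"},
    category_specific={"voltage_v": 18, "chuck_size_mm": 13},
    assets=[DigitalAsset("A-1", "image", "assets/p-1001-front.png")],
)
master = MasterProduct(drill, system_ids={"ERP": "MAT-778", "PLM": "ITEM-42"})
```

In this sketch, `master.id_in("ERP")` looks up the linked ERP identifier, while `master.id_in("SCM")` returns `None` because no SCM link was recorded. A real setup would of course involve far more attributes, governance and matching logic than a few dictionaries.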

How these disciplines stick together within your organization and your digital ecosystem is examined further in the post "How MDM, PIM and DAM Stick Together".
