While a lot has been written about the original 99 articles in the EU General Data Protection Regulation (GDPR), perhaps less attention has been paid to the additional articles added since the first approval on 14th April 2016, which will also apply from 25th May 2018.

The original articles mainly emphasize the rights of the data subject, but the later additions also take care of the rights of data controllers. Not least, article 101 on the right to indulgence for data controllers (and data processors) falls into that category.

A representative of the EU, Don Par, explains it this way: “If you are about to be caught, you can escape the heavy fines by confessing your sins and paying a more modest purification fee, which, if you subscribe to an annual scheme, may eventually allow you to continue business as usual”.

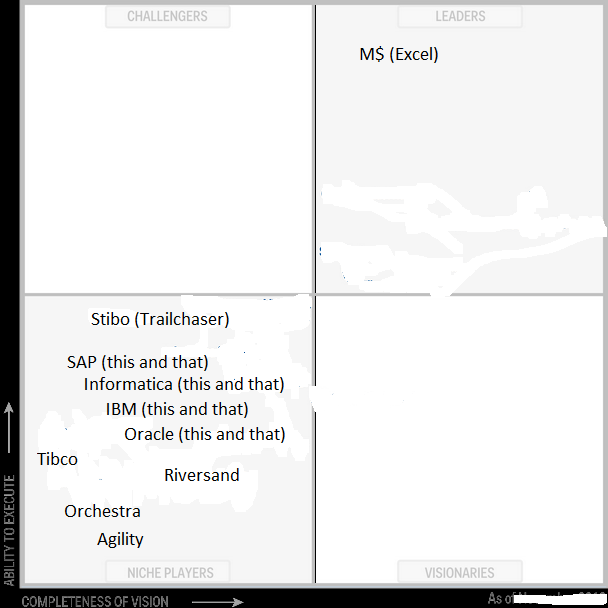

The consultancy industry has also kept a low profile on article 101. Max Hagnaður of The GDPR Advisory Institute puts it this way: “First we wanted to make money advising on how to comply with GDPR. When it turns out that only very few companies will do so, we will redirect our hailstorms of PowerPoint decks to article 101 and how to apply for indulgence”.

Indeed, the costs of indulgence may very well be lower than the costs of compliance (not to mention fines, because you will fail anyway).

During the last couple of years, social media have been flooded with an image and a silly

An upcoming executive order will enforce a tall and beautiful wall around each data centre. It’s true.

a lot of consequences in our life and the next big reform is the end of the time zones.

The founder of The Matching Institute is Alexandra Duplicado. Aleksandra says: “The reason I founded The Institute of Data Matching is that I am sick and tired of receiving duplicate letters with different spellings of my name and address”. Alex is also pleased that she has now found a nice office within edit distance of her home.