One of the ways to ensure data quality for customer, or rather party, master data when operating in a business-to-business (B2B) environment is to on-board new entries using an externally defined business entity identifier.

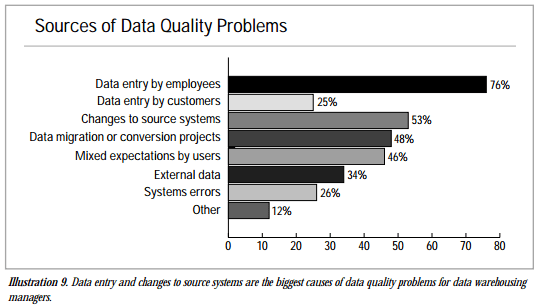

By doing that, you tackle some of the most challenging data quality dimensions, such as:

- Uniqueness, by checking if a business with that identifier already exists in your internal master data. This approach is superior to using data matching, as explained in the post The Good, Better and Best Way of Avoiding Duplicates.

- Accuracy, by having names, addresses and other information defaulted from a business directory, thus avoiding the spelling mistakes that are usually all over party master data.

- Conformity, by inheriting additional data such as line-of-business codes and descriptions from a business directory.
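The three points above can be sketched as a small on-boarding routine. This is only an illustration under stated assumptions: the `DirectoryRecord` and `Party` shapes, the `onboard_party` function and the in-memory dictionaries standing in for the business directory and the internal master data are all hypothetical, and no real directory API is implied.

```python
from dataclasses import dataclass


@dataclass
class DirectoryRecord:
    """Hypothetical shape of what a business directory returns."""
    identifier: str
    name: str
    address: str
    lob_code: str          # line-of-business code
    lob_description: str   # line-of-business description


@dataclass
class Party:
    """Hypothetical internal party master data record."""
    identifier: str
    name: str
    address: str
    lob_code: str
    lob_description: str


class DuplicatePartyError(Exception):
    """Raised when the identifier is already in the master data."""


def onboard_party(identifier, directory, master_data):
    """On-board a party by its external business identifier.

    `directory` and `master_data` are plain dicts keyed by identifier;
    they stand in for a real directory service and a real MDM store.
    """
    # Uniqueness: an exact lookup on the identifier, no fuzzy matching.
    if identifier in master_data:
        raise DuplicatePartyError(f"{identifier} is already on-boarded")

    record = directory.get(identifier)
    if record is None:
        raise LookupError(f"{identifier} not found in the directory")

    # Accuracy: name and address are defaulted from the directory
    # rather than typed in by hand.
    # Conformity: line-of-business code and description are inherited.
    party = Party(
        identifier=record.identifier,
        name=record.name,
        address=record.address,
        lob_code=record.lob_code,
        lob_description=record.lob_description,
    )
    master_data[identifier] = party
    return party
```

The point of the sketch is that the uniqueness check becomes a simple key lookup, while the descriptive fields are copied from the directory instead of being entered manually.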

Having an external business identifier stored with your party master data helps a lot with maintaining data quality, as pondered in the post Ongoing Data Maintenance.

When selecting an identifier, there are different options such as national IDs, LEI, DUNS Number and others, as explained in the post Business Entity Identifiers.


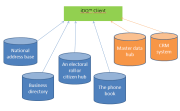

At the Product Data Lake service I am working on right now, we have decided to use an external business identifier from day one. I know this may be something a typical start-up will consider much later, if and when the party master data population has grown. But, besides being optimistic about our service, I think it will be a win not to have to fight data quality issues later with guaranteed increased costs.

As the identifier to use, we have chosen the DUNS Number from Dun & Bradstreet. The reason is that this is currently the only business identifier with worldwide coverage. Also, Dun & Bradstreet offers some additional data that fits our business model. This includes consistent line-of-business information and worldwide company family trees.
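When a DUNS Number is used as a key, it helps to store it in one canonical form. A DUNS Number is a nine-digit identifier that is often written with hyphens for readability. The sketch below, with the hypothetical helper name `normalize_duns`, strips such separators and rejects anything that is not nine digits. It only checks the format and says nothing about whether the number is actually assigned to a business.

```python
import re


def normalize_duns(raw: str) -> str:
    """Return the DUNS Number as nine plain digits.

    Hypothetical helper: removes spaces and hyphens, then checks the
    length and character set. It does not verify that the number exists.
    """
    digits = re.sub(r"[\s-]", "", raw)
    if not re.fullmatch(r"[0-9]{9}", digits):
        raise ValueError(f"not a nine-digit DUNS Number: {raw!r}")
    return digits
```

Normalising before the lookup means that the same number written with and without hyphens resolves to the same master data key.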

If we look at customer, or rather party, Master Data Management (MDM), it is very much about real-world alignment. In party master data management you describe entities such as persons and legal entities in the real world, and you should have descriptions that reflect the current state (and sometimes historical states) of these entities. Some reflections will be
