El Festival del IDQ Bloggers is another name for the monthly recurring post of selected (actually rather submitted) blog posts on information and data quality started last year by the IAIDQ.

This is the February 2010 edition covering posts published in December 2009 and January 2010.

I will go straight to the point:

Daragh O Brien shared the story about a leading Irish Hospital that has come under scrutiny for retaining data without any clear need. This highlights an important relationship between Data Protection/Privacy and Information Quality. Daragh’s post explores some of this relationship through the “Information Quality Lense”. Here’s the story: Personal Data – an Asset we hold on Trust.

Former Publicity Director of the IAIDQ, Daragh has over a decade of coal-face experience in Information Quality Management at the tactical and strategic levels from the Business perspective. He is the Taoiseach (Irish for chieftain) of Castlebridge Associates. Since 2006 he has been writing and presenting about legal issues in Information Quality amongst other topics.

Jim Harris is an independent consultant, speaker, writer and blogger with over 15 years of professional services and application development experience in data quality. Obsessive-Compulsive Data Quality is an independent blog offering a vendor-neutral perspective on data quality.

If you are a data quality professional, know the complete works of Shakespeare by heart and can wake up at night and promptly explain the theories of Einstein, you probably know Jim’s blogging. On the other hand, if you don’t know Shakespeare and don’t understand Einstein: Jim to the rescue. Read The Dumb and Dumber Guide to Data Quality.

In another post Jim discusses the out-of-box-experience (OOBE) provided by data quality (DQ) software under the title: OOBE-DQ, Where Are You? Jim also posted part 8 of Adventures in Data Profiling – a great series of knowledge sharing on this important discipline within data quality improvement.

Phil Wright is a consultant based in London, UK who specialises in Business Intelligence and Data Quality Management. With 10 years’ experience in the Telecommunications and Financial Services industries, Phil has implemented data quality management programs, led data cleansing exercises and enabled organisations to realise their data management strategy.

The Data Factotum blog is new to the Data Quality blogosphere, but Phil has kick-started it with 9 great posts during the first month. A balanced approach to scoring data quality is the start of a series on using the balanced scorecard concept in measuring data quality.

Jan Erik Ingvaldsen is a colleague and good friend of mine. In a recent market competition scam, cheap flight tickets from Norwegian Air Shuttle were booked by employees of competitor Cimber Sterling using all kinds of funny names. As usual, Jan Erik not only has a nose for a good story but is also able to propose solutions, as seen here in Detecting Scam and Fraud.

In his position as Nordic Sales Manager at Omikron Data Quality, Jan Erik actually is a frequent flyer with Norwegian Air Shuttle. Now he is waiting to see whether he will be included on their vendor list or on the no-fly list.

William Sharp is a writer on technology focused blogs with an emphasis on data quality and identity resolution.

Informatica Data Quality Workbench Matching Algorithms is part of a series of postings where William details the various algorithms available in Informatica Data Quality (IDQ) Workbench. In this post William starts by giving a quick overview of the algorithms available and some typical uses for each. The subsequent postings get more detailed and outline the math behind each algorithm, and the series will finish with some baseline comparisons using a single set of data.

Personally I really like this kind of ready made industrial espionage.

IQTrainwrecks hosted the previous blog carnival edition. From this source we also have a couple of postings.

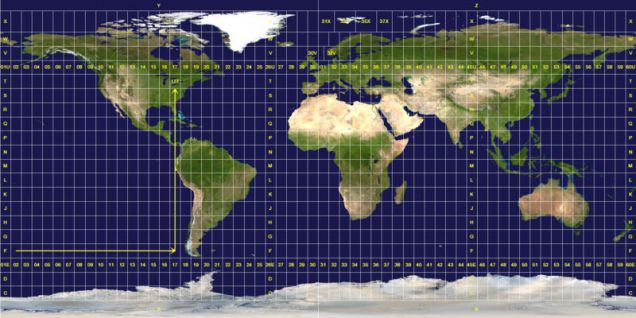

The first was submitted by Grant Robinson, the IAIDQ’s Director of Operations. He shares an amusing but thought-provoking story about the accuracy of GPS systems and online maps, based on his experiences working in Environmental Sciences. Take a dive in the ocean…

Also it is hard to avoid including the hapless Slovak border police and their accidental transportation of high explosives to Dublin due to a breakdown in communication and a reliance on inaccurate contact information. Read all about it.

And finally, we have the post about the return of the Y2K Bug as systems failed to properly handle the move into a new decade. It highlights the need to keep tactical solutions to information quality problems under review in a continuous improvement culture, in case the problem recurs in a different way. Why 2K?

If you missed them, here’s a full list of previous carnival posts:

April 2009 on Obsessive-Compulsive Data Quality by Jim Harris

May 2009 on The DOBlog by Daragh O Brien

June 2009 on Data Governance and Data Quality Insider by Steve Sarsfield

July 2009 on AndrewBrooks.co.uk by Andrew Brooks

August 2009 on The DQ Chronicle by William E Sharp

September 2009 on Data Quality Edge by Daniel Gent

October 2009 on Tooling around in the IBM Infosphere by Vincent McBurney

November 2009 on IQTrainwrecks.com by IAIDQ

While deduplication and identity resolution may be treated as polar opposites and seemingly contrary disciplines they are in my eyes interconnected and interdependent. Yin and Yang Data Quality.
