We often talk about big data as if it were one kind of data, while in fact different sorts of big data need separate approaches, for example when handling data quality issues.

In the following I will go through some different types of big data and share some observations related to data quality.

Social data

Social data is, I guess, the most mentioned type of big data. The opportunity to listen to Twitter streams and Facebook status updates in order to get better customer insight is an often stated business case for analyzing big data.

However, everyone who listens to such data will be aware of the tremendous data quality problems in doing so, as told in the post Crap, Damned Crap and Big Data.

Sensor data

Another often mentioned type of big data is sensor data. As examined in the post Social Data vs Sensor Data, sensor data is somewhat different from social data, with less complex data quality issues. It is still not free of data quality flaws, though, as reported in the post Going in the Wrong Direction.

Web logs

Following the clicks of people surfing the internet gives us a third type of big data. This kind of big data shares characteristics with both social data and sensor data: it is human-generated like social data, but more fact-oriented like sensor data.

Big transaction data

Even traditional transaction data in huge volumes is treated as big data, but it of course inherits the same data quality challenges as all transaction data. Even though the data is structured, we may have trouble establishing the right relations to the who, what, where and when in the transactions. And that isn’t easier with large volumes.
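To make the who, what, where and when a bit more concrete, here is a minimal sketch of how one might check those relations in a batch of transactions. Everything in it is an assumption made for illustration: the `Transaction` fields, the `find_broken_links` helper and the sample identifiers are hypothetical and do not come from any particular system.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Transaction:
    # Hypothetical record layout, assumed for this sketch only.
    transaction_id: str
    customer_id: str  # who
    product_id: str   # what
    location_id: str  # where
    timestamp: str    # when, expected as ISO 8601 text


def find_broken_links(transactions, customers, products, locations):
    """Map each problematic transaction id to the dimensions that fail.

    The three reference arguments are sets of known identifiers,
    standing in for customer, product and location master data.
    """
    broken = {}
    for tx in transactions:
        problems = []
        if tx.customer_id not in customers:
            problems.append("who")
        if tx.product_id not in products:
            problems.append("what")
        if tx.location_id not in locations:
            problems.append("where")
        try:
            # Rejects malformed text and impossible dates alike.
            datetime.fromisoformat(tx.timestamp)
        except ValueError:
            problems.append("when")
        if problems:
            broken[tx.transaction_id] = problems
    return broken


# Made-up sample data: t2 points at an unknown customer
# and carries a date that does not exist.
transactions = [
    Transaction("t1", "c1", "p1", "l1", "2024-01-15T09:30:00"),
    Transaction("t2", "c9", "p1", "l1", "2024-02-30T10:00:00"),
]
broken = find_broken_links(transactions, {"c1"}, {"p1"}, {"l1"})
print(broken)
```

Looping over records in memory like this will not scale to truly large volumes, where the same check would more likely be expressed as joins against the master data. The point of the sketch is only that structured data can still hold dangling references.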

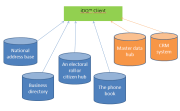

Big reference data

When reference data grows big, we also meet big complexity. Try, for example, to build a reference data set with all the valid postal addresses in the world. Several standardization bodies are having a hard time agreeing on a common model for that right now. Learn about other examples of big reference data and the related complexity in the post Big Reference Data Musings.