Please welcome Rick Buijserd from The Netherlands to the classroom as the next guest blog post author:

As a child you were happy when the bell rang and the school day ended. It was time to play with your friends and not think about learning anymore, just play! Most of us look back on this time as the best time of our lives: a time without any worries, enjoying every moment of it. Even though it wasn’t our main focus as children, it was also the time when we learned new ideas and things every day. Are we still learning every day? Are you learning new things about data management every day? You should, and here is why…

Gaining knowledge

Data is the new oil, and many of us make a decent living by advising or consulting companies in this area of expertise. But as time goes by, so do the developments, and in the technology world this goes fast, very fast. In the last couple of years the data environment has become bigger and bigger. First there was just data inside companies; now you have to combine multiple sources of data to get a clear view. And the sources keep on changing. Big data used to be a term that was undefined and impossible to put to use. For many it still is, but others use big data to enrich their companies and enable their growth. Just summing this up shows how much has changed in the last couple of years, and you have to keep up to stay relevant. Learning and gaining knowledge is the only key to success in the long term. Artificial Intelligence and Machine Learning, powered by optimal use of data and data management, will take over many tasks, but in the end human creativity and the ability to learn will provide success and the power to make the difference.

Data Management is never finished and neither is learning about it

If you have been in the world of data management for a while, you know that data management is never finished, and neither is the possibility of gaining knowledge. New books about data management keep being published, and research firms keep on researching and making new discoveries. Many companies use the evolution of technology to grow. Communities are also built around topics on many different platforms. The possibility to learn is everywhere! Use it to your benefit; data management is never finished…

Rick Buijserd is the author and owner of the platform Data Management Experts and a young professional with experience in the world of data. He started his career at a well-known software vendor as a channel manager, where he learned the skills of indirect sales and managing partners. Financial, HR, Logistics, Warehousing and PSA were the main elements of his software sales. Building relationships with experts and other vendors is part of his DNA.

After a couple of years he decided to make a switch and landed in the world of accountancy firms. In this period he became a trusted advisor to many accountancy firms in The Netherlands. Finance, financial reporting, tax, auditing and other accountancy-related activities hold no secrets for him. Together with his clients he developed many solutions to their challenges. In this period his love for data management surfaced. Accountancy firms are the ultimate example of being data driven. It is all they know.

In the most recent period of his career he stepped into the world of multinationals, and as of today he is still active in this world, advising on data management and selling software solutions to multinationals that have challenges in the area of data management. He is also an expert in social selling via LinkedIn, and he has put this knowledge into practice via a LinkedIn Group for Dutch Data Management Experts, in which he gathers the top data management experts from the largest companies in The Netherlands to discuss all kinds of data-related topics.

Now, the focal point of Product Data Lake is not the exciting world of address data quality, but product data quality.

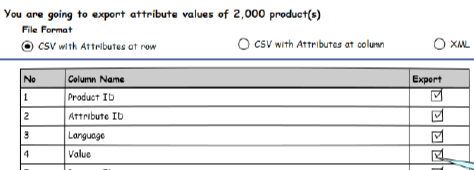

This resonates very well with my findings. In very practical terms, this means that you will not win by translating all product descriptions into English. Even the metadata has to be multilingual, as you will interact with trading partners using different languages. While one public standard for product information may be king in one region, this will most likely not be the case in another region, which in turn affects how you collaborate with trading partners in different geographies.

Many implementations start with a national scope, and we also see many tools and services built for a national scope. Unfortunately, success on a national scale does not always guarantee success on an international scale.

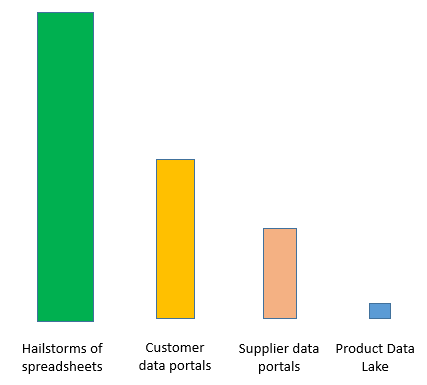

Data Lake

When selecting an identifier there are different options, such as national IDs, LEI, DUNS Number and others, as explained in the post
