What do the future of data and the need for power when travelling have in common? A lot, as Ken O’Connor explains in today’s guest blog post:

Bob Lambert wrote an excellent article recently summarising the New Direction for Data set out at Enterprise Data World 2017 (#EDW17). As Bob points out, “Those (organisations) that effectively manage data perform far better than organisations that don’t”. A key theme from #EDW17 is for data management professionals to “be positive” and to focus on the business benefits of treating data as an asset. On a related theme, Henrik on this blog has been highlighting the emergence of business ecosystems and digital platforms, and the value to be derived from them.

Building on Bob’s and Henrik’s ideas, I believe we need a paradigm shift in the way we think and talk about data. We need to promote the business benefits of data sharing via “Plug and Play Data”.

When we travel, we expect to be able to use our mobile devices anywhere in the world. We do this by using universal adaptors that convert country-specific plug shapes and power levels for us.

We need to apply the same concept to data. To enable data to be more easily reused across and between enterprises, we need to create “plug and play data”.

How can organisations create “plug and play data”?

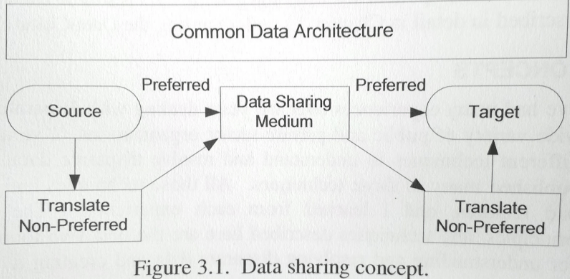

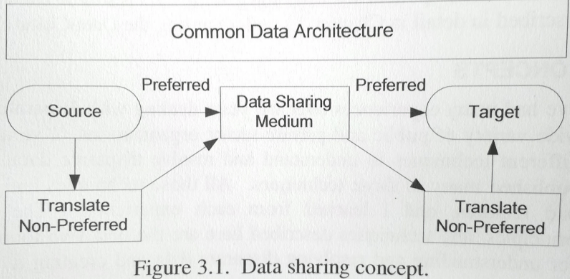

In the past, organisations could simply verify that the data they create, capture, ingest and share conforms to the business rules for their own organisation. That “silo-based” approach is no longer tenable. In today’s world, as Henrik points out, organisations increasingly play a role within a business ecosystem, as part of a data supply chain. Hence they need to exchange data with business partners. To do this, they need to apply a “Data Sharing Concept” within a “Common Data Architecture”, as set out by Michael Brackett in his excellent books “Data Resource Simplexity” and “Data Resource Integration”. Michael describes a “Data Sharing Medium”, which is similar in concept to the universal adaptor above. For data sharing, this involves the organisations within a given business ecosystem agreeing a “preferred form” for the data they share.

I quote Michael: “The Common Data Architecture provides a construct for readily sharing data. When the source data are not in the preferred form, the source organisation must translate those non-preferred data to the preferred form before being shared over the data sharing medium. Similarly, when the target organisation uses the preferred data, they can be readily received from the data sharing medium. When the target organisation does not use preferred data, they must translate the preferred data to their non-preferred form. The “data sharing concept” states that shared data are transmitted over the data sharing medium as preferred data. Any organisation, whether source or target, that does not have or use data in the preferred form is responsible for translating the data.”
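To make the idea concrete, here is a minimal sketch in Python of how the translation responsibilities could look. It is an illustration only, not taken from Michael’s books: the `PreferredCustomer` record, the field names and both translation functions are hypothetical, and a real ecosystem would agree its own preferred form.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PreferredCustomer:
    """Hypothetical 'preferred form' agreed by the business ecosystem."""
    customer_id: str
    full_name: str
    date_of_birth: date
    country_code: str  # assumed to be a two-letter upper-case code


def source_to_preferred(record: dict) -> PreferredCustomer:
    """The source organisation translates its own layout to the preferred form.

    Assumes the source stores dates as DD/MM/YYYY strings (hypothetical).
    """
    day, month, year = (int(part) for part in record["dob"].split("/"))
    return PreferredCustomer(
        customer_id=str(record["cust_no"]),
        full_name=f'{record["first_name"]} {record["surname"]}',
        date_of_birth=date(year, month, day),
        country_code=record["country"].strip().upper(),
    )


def preferred_to_target(customer: PreferredCustomer) -> dict:
    """The target organisation translates the preferred form to its own layout.

    Assumes the target wants upper-case names and YYYYMMDD dates (hypothetical).
    """
    return {
        "id": customer.customer_id,
        "name": customer.full_name.upper(),
        "birth_date": customer.date_of_birth.strftime("%Y%m%d"),
        "country": customer.country_code,
    }


# Only the preferred form travels over the data sharing medium.
source_record = {
    "cust_no": 1042,
    "first_name": "Mary",
    "surname": "Byrne",
    "dob": "07/03/1985",
    "country": " ie ",
}
shared = source_to_preferred(source_record)
received = preferred_to_target(shared)
```

The point of the sketch is that neither organisation needs to know the other’s internal layout. Each one writes a single translation to or from the preferred form, just as each traveller carries one universal adaptor rather than a plug for every country.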

In conclusion:

We Data Management Professionals need to educate both Business and IT on the need for, and the benefits of, “plug and play data”. We need to help business leaders to understand that data is no longer used by just one business process. We need to explain that even tactical solutions within Lines of Business need to consider Enterprise and business ecosystem demands for data such as:

- Data feeds into regulatory systems

- Data feeds to and from other organisations in the supply chain

- Eventual replacement of the application with a newer-generation system

We must educate the business on the increasingly dynamic information requirements of the Enterprise and beyond, which can only be satisfied by creating “plug and play data” that can be easily reused and interconnected.

Ken O’Connor is an independent consultant with extensive experience helping multi-national organisations satisfy the Data Quality / Data Governance requirements of regulatory compliance programmes such as GDPR, Solvency II, Basel II/III, Anti-Money Laundering, Anti-Fraud, Anti-Terrorist Financing and BCBS 239 (Risk Data Aggregation and Reporting).

Ken’s “Data Governance Health Check” provides an independent, objective assessment of your organisation’s internal data management processes to help you to identify gaps you may need to address to comply with regulatory requirements.

Ken is a founding board member of the Irish Data Management Association (DAMA) chapter. He writes a popular industry blog that regularly focuses on a wide range of data management issues faced by modern organisations: (Kenoconnordata.com).

You may contact Ken directly by emailing: Ken@Kenoconnordata.com
