When working with master data management and data quality, including data matching, one of the most frequent pieces of information you work with is the postal code.

Wikipedia has a good article about postal code.


Some of the data quality issues related to the datum postal code are:

Metadata

Around the world, different terms are used for a postal code:

- ZIP code, the United States implementation of a postal code, is often used synonymously for a postal code in many databases and user interfaces. This is not seriously wrong, but not right either.

- In India a postal code (in English) is called a PIN Code (Postal Index Number). This could definitely trick me.
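When profiling data sources, the naming differences above can be handled with a small alias lookup. The following is only a sketch: the alias set and the function name `is_postal_code_field` are assumptions made for illustration, and a real list would have to be built from the sources at hand.

```python
import re

# Hypothetical alias set, compared after stripping everything but letters.
POSTAL_CODE_ALIASES = {"zip", "zipcode", "postcode", "postalcode", "pincode"}


def is_postal_code_field(field_name):
    """Guess whether a column or field label refers to a postal code."""
    # "ZIP Code", "zip_code" and "Zip-Code" all reduce to "zipcode".
    key = re.sub(r"[^a-z]", "", field_name.lower())
    return key in POSTAL_CODE_ALIASES
```

A bare "PIN" is deliberately left out of the aliases, as it could just as well be a personal identification number.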

Format

There are basically two different formats of postal codes around:

- Numeric postal codes are the most common ones. The number of digits does, however, differ between countries, and there are some additional considerations:

  - For example, the 9-digit United States ZIP code is split into the original 5 digits and the 4 additional digits implemented later.

  - Postal codes may begin with 0, which may create formatting errors when they are treated as numbers.

- Some countries, for example the United Kingdom, the Netherlands, Canada and Argentina, have alphanumeric postal codes.
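The format issues above can be sketched in a few lines of Python. This is a minimal illustration, not a complete validator: the helper names are made up for this example, and the two patterns only capture the general shape of US and Dutch codes, not every national rule.

```python
import re

# Simplified, illustrative shapes only; real national rules have more exceptions.
PATTERNS = {
    "US": re.compile(r"\d{5}(-\d{4})?"),   # 5 digits, optional 4-digit add-on
    "NL": re.compile(r"\d{4} ?[A-Z]{2}"),  # 4 digits followed by 2 letters
}


def restore_leading_zeros(value, width):
    """Turn a postal code that was stored as a number back into fixed-width text."""
    return str(value).zfill(width)


def split_zip(zip_code):
    """Split a US ZIP code into the 5-digit part and the optional 4-digit add-on."""
    digits = zip_code.strip().replace("-", "")
    if len(digits) not in (5, 9) or not digits.isdigit():
        raise ValueError(f"not a 5 or 9 digit ZIP code: {zip_code!r}")
    return digits[:5], (digits[5:] or None)


def looks_valid(country, code):
    """Check a code against the simplified pattern for the given country."""
    return PATTERNS[country].fullmatch(code.strip().upper()) is not None
```

For example, `restore_leading_zeros(2116, 5)` gives back `"02116"`, and `split_zip("02116-1234")` returns `("02116", "1234")`. The safer choice is to store postal codes as text in the first place.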

Embedded Information

Numeric postal codes usually form some kind of hierarchy in which you can guess the geographical position within the country and make ranges representing smaller or larger geographical areas. But you never know.

This also goes for Dutch (you know, the ones in the Netherlands) postal codes as the first 4 characters are numeric.

The UK postal codes usually start with a mnemonic of the main city in the area, except in a lot of cases.
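Where the hierarchy does hold, grouping codes by their leading characters is one way to build such areas. The sketch below assumes that a shared prefix means a shared area, which, as noted, is not guaranteed; the function name and the sample codes are chosen for illustration only.

```python
from collections import defaultdict


def group_by_prefix(codes, prefix_length):
    """Group postal codes (as strings) by their first prefix_length characters."""
    groups = defaultdict(list)
    for code in codes:
        groups[code[:prefix_length]].append(code)
    return dict(groups)


# Arbitrary four-digit sample codes, grouped on the first digit.
areas = group_by_prefix(["2100", "2150", "8000"], 1)
```

Here `areas` ends up as `{"2": ["2100", "2150"], "8": ["8000"]}`. A longer prefix gives smaller areas, a shorter one larger areas.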

Precision

Some postal code systems have postal codes covering larger areas with many streets, while others are very granular, with each street, or part of a street, having a distinct postal code.

The UK postal code system is very granular, which has paved the way for using rapid addressing, as told in a recent article in the UK Database Marketing Magazine.

Coverage

Utilizing rapid addressing requires that reference data for postal codes covers practically every spot in the country and that updates are available on a near-real-time basis.

Some countries have postal code systems that do not cover every corner, and some countries have no postal code system at all.

Uniqueness

The main reason for implementing postal code systems is that a town or city name in many cases isn’t unique within a country.

But that doesn’t mean that uniqueness works the other way as well. A postal code may in many countries cover several town names. France is an example.
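Both directions of non-uniqueness can be checked against reference data with the same small helper. This is a sketch under the assumption that the data is available as (postal code, town name) pairs; the function name and the sample records are invented for illustration.

```python
from collections import defaultdict


def ambiguous_keys(pairs):
    """Return the keys that map to more than one distinct value."""
    seen = defaultdict(set)
    for key, value in pairs:
        seen[key].add(value)
    return {key: sorted(values) for key, values in seen.items() if len(values) > 1}


# Invented sample records: (postal code, town name).
records = [
    ("1000", "Alphaville"),
    ("1000", "Betatown"),
    ("2000", "Gammaburg"),
    ("3000", "Gammaburg"),
]

# Postal codes covering several town names.
codes_with_many_towns = ambiguous_keys(records)

# Town names covered by several postal codes (pairs swapped).
towns_with_many_codes = ambiguous_keys((town, code) for code, town in records)
```

With these records the first result flags postal code 1000, and the second flags Gammaburg.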

Consistency

While we basically have granular and not-so-granular postal code systems, we of course also have hybrids.

In Denmark, for example, there is a granular system in the capital Copenhagen with a postal code for each street, named by the street, and a system in the rest of the country with a postal code for an area, named by the suburb or town.

Fit for purpose

A postal code is a hierarchical element in a postal address. We basically have two forms of postal addresses:

- A geographical address, where the postal address including the postal code points to a place you can also visit to meet the people receiving the things sent there

- A post-office box, which may have more or less geographical connection to where the people receiving the things sent there actually are

Penetration of post-office boxes differs around the world. In Namibia it is mandatory. In Sweden most companies have a post-office box address.

Trying to compare data with these different concepts is like comparing apples and oranges, which often goes bananas.


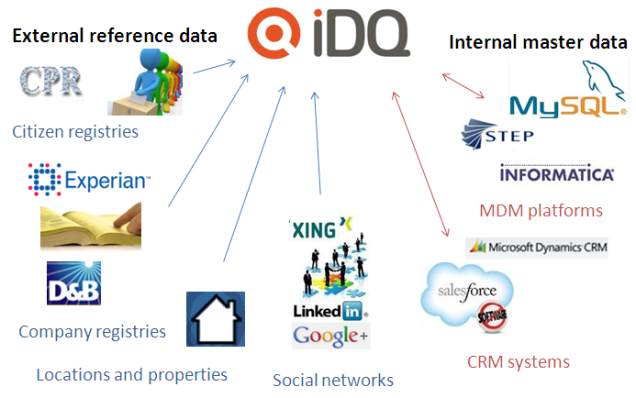

As I have long experience with data matching services around the Dun & Bradstreet WorldBase, it was good to see a presentation yesterday in Stockholm featuring D&B Europe’s new cloud-based data manager service.
