255 is one source of truth about how many countries we have on this planet. Even for this modest list of reference data there are several sources of truth: another list may have 262 entries and a third list 240 entries.

Since I wrote a blog post some years ago called 55 reasons to improve data quality, I think 255 fits nicely in the title of this post.

The 55 reasons to improve data quality in the former post revolve around name and address uniqueness. In the quest for uniqueness, and for fulfilling other data quality dimensions such as completeness and timeliness, I have often advocated using deep (or big) reference data sources such as address directories, business directories and consumer/citizen directories.

Doing so in a best-of-breed way involves dealing with a huge number of reference data sources. Services claiming worldwide coverage often fall a bit short compared to local services that use local reference sources.

For example, when I lived in Denmark, a tiny place in one corner of the world, I was often amazed at how address correction services from abroad only had (sometimes outdated) street-level coverage, while local reference data sources provided building-number and even suite-level validation.

Another example was discussed in the post The Art in Data Matching, where the comments stressed the multi-lingual capabilities needed to do well in Belgium.

Every country has its own special requirements for getting name and address data quality right. The data quality dimensions for reference data are different, and governments have found 255 (or so) different solutions to balancing privacy and administrative effectiveness.

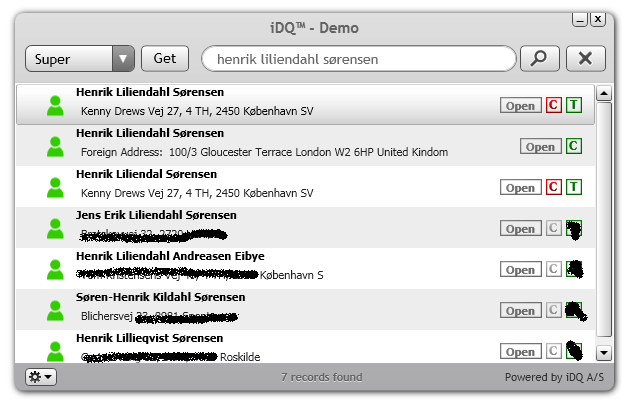

Right now I’m working on internationalization and internationalisation of a data and software service called instant Data Quality. This service makes big reference data from all over the world available in a single mashup. For that we need at least 255 partners.