The data governance discipline, the data quality discipline and the Master Data Management (MDM) discipline are closely related and happen to be my fields of work.

Data quality improvement is important within data governance and MDM. Furthermore, you seldom see an MDM implementation today without a (master) data governance work stream.

Over time it has often been suggested that data quality should rightfully be named information quality, as discussed in the post New Blog Name. In addition, data governance could be referred to as information governance, as suggested in the Mike2 Open Methodology here.

Within MDM we have the term Product Information Management (PIM) which is partly, but maybe not fully, the same as Product MDM, as examined by Monica McDonnell of Informatica in the post PIM is Not Product MDM – Product MDM is not PIM.

Product is one of several domains within MDM, where customer (or rather party), location and asset are other domains going into multi-domain MDM as reported in the post Multi-Entity MDM vs Multidomain MDM.

While replacing the term data with the term information for data quality, data governance and, for that matter, (multi-domain) master data management has had limited success outside academic circles, I do see it as very suitable for being part of a term covering these three disciplines as a whole.

So what should these three disciplines be called as a whole? Have you noticed any good terms or smart hypes out there? Or are they just three of several disciplines within data or information management?

As data governance is still an emerging discipline, the available resources are of that nature too. There are plenty of good and insightful articles, blog posts and other pieces of information around. But when you try to put them together to work in a data governance journey, the recommendations may point in a lot of different directions.

I think we have used the term data quality much longer than we have used the term data governance. Before data governance became a popular term, organizations did run data quality programs without doing something called data governance. However, doing something about data quality is an act of data governance, just maybe without some of the formalized things we have only recently put under the umbrella called data governance.

During the two days a lot of ideas for how to exploit open public sector data within the private sector were put on the table. I was lucky enough to win a SmartWatch as part of the group with the winning concept, which is a service for identifying buildings with potential for energy saving improvements. This service will benefit large enterprises such as building material manufacturers (and in fact energy suppliers), local small and midsize businesses, house owners and society as a whole in working towards climate change prevention goals.

The post refers to a report by Sunil Soares. In this report, data governance tools are seen as tools related to six areas within enterprise data management: data discovery, data quality, business glossary, metadata, information policy management and reference data management.

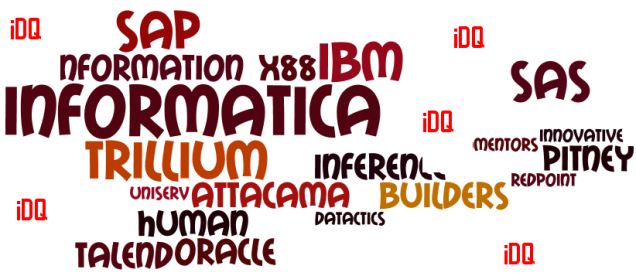
