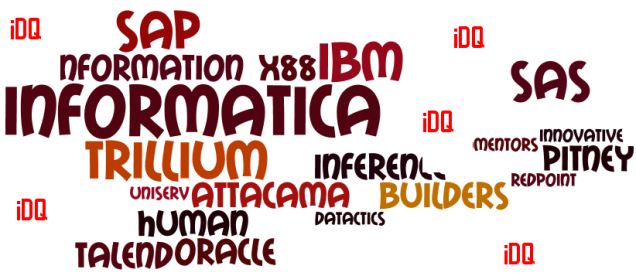

The Gartner Magic Quadrant for Data Quality Tools 2013 is out. If you don’t want to pay Gartner’s fee to have a look, you can sign up for a free copy on one of the vendors’ websites, for example here at Trillium Software Insights.

So, what’s new this year?

It is pretty much the same picture as last year, with X88 as the only new entrant. Otherwise, the news is that some vendors “now appear under slightly different names”. And Ted Friedman is now the only author.

The most exciting part, in my eyes, is the discussion of how the market will develop. Some seen and foreseen trends are:

- Information governance programs drive the need for data quality tools.

- Cloud-based deployments are gaining traction.

- Growth is expected in embracing less-structured data, not least social data, by using big data techniques and sources.

That’s good news.