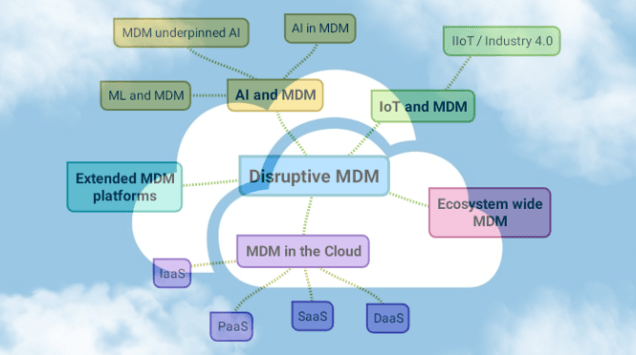

A recent post on this blog was called Five Disruptive MDM Trends. One of the trends mentioned herein is MDM in the cloud and one form of Master Data Management in the cloud in the picture is Data as a Service (DaaS).

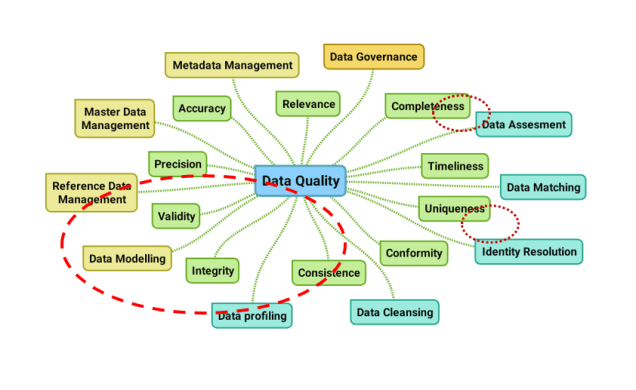

Using Data as a Service in the cloud within MDM solutions is a great way of ensuring data quality. You have access to real-time validation and enrichment of master data and you can also use third party and second party services in the on-boarding processes and then avoid typing in data with the unavoidable human errors that else is the most common root cause of data quality issues.

Some of the most common data services useful in MDM are:

Address Verification and Geocoding

When handling location data having a valid and standardized description of postal addresses and in many cases also a code that tells about the geographic position is crucial in MDM.

Postal address verification can either be exploited by a global service such as Loqate from GB Group or AddressDoctor, which is part of the Informatica offering. Alternatively, you can use national services that are better (but also narrowly) aligned with a given address format within a country and the specific extra services available in some countries.

Geocodes can either by latitude and longitude or flat map friendly geocoding systems such as UTM coordinates or WGS84 coordinates.

Business Directory Services

When handling party master data as B2B customers, suppliers and other business partners in is useful to validate and enrich the data with third party reference data and in some cases even onboard through these sources.

Again, there are global and local options. The most commonly used global is Dun & Bradstreet, who operates a database called WorldBase that holds business entities from all over the world in a uniform format and also provides data about the company family trees on a global basis. Alternatively, many countries have a national service provided by each government with formats and data elements specific to that country.

Citizen Directory Services

When handling party master data as B2C customers, employees and other personal data the third-party possibilities are sparser in general, naturally because of privacy concerns.

In Scandinavia, where I live, these data are available from public sources based on either our national ID or a correct name and address.

Data pools and Product Data Lake

When handling product master data and product information there are for some product groups and product attributes in some geographies data pools available. The most commonly used global service is GDSN from GS1.

Alternatively (or supplementary), for all other product groups, product attributes and digital assets and in all other geographies, you can use a service like the one I am working with and is called Product Data Lake.