Achieving a Single Customer View (SCV) is a core driver for many data quality improvement and Master Data Management (MDM) implementations.

As most data quality practitioners will agree, the best way of securing data quality is getting it right the first time. The same is true about achieving a Single Customer View. Get it right the first time. Have an instant Single Customer View.

The cloud-based solution I’m working with right now does this by:

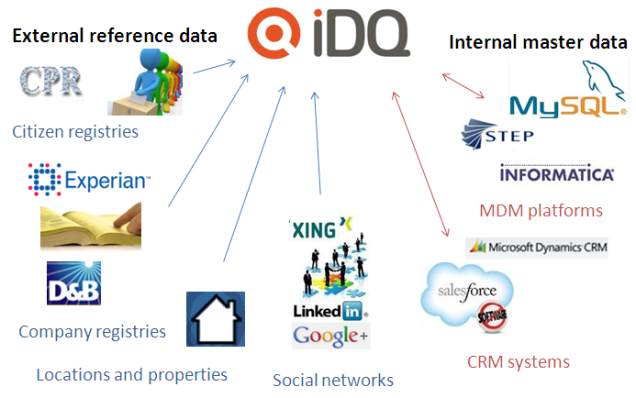

- Searching external big reference data sources with information about individuals, companies, locations and properties as well as social networks

- Searching internal master data with information already known inside the enterprise

- Inserting genuinely new entities, or updating existing ones, while picking up as much data as possible from the external sources
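To make the flow concrete, here is a minimal sketch in Python of the search-before-create idea. It is not the actual solution's code: the function names (`similarity`, `best_match`, `upsert_customer`), the record layout (plain dicts with a `name` key), the in-memory lists standing in for the external and internal sources, and the 0.85 threshold are all assumptions made for illustration. The fuzzy comparison uses the standard library's `difflib.SequenceMatcher` as a stand-in for whatever matching a real product would use.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity score, ignoring case and surrounding spaces."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def best_match(name, records, threshold=0.85):
    """Return the record whose name is most similar to `name`, or None."""
    best, best_score = None, threshold
    for record in records:
        score = similarity(name, record["name"])
        if score >= best_score:
            best, best_score = record, score
    return best


def upsert_customer(name, external, internal):
    """Search external reference data, then internal master data,
    then insert a new entity or update the existing one."""
    reference = best_match(name, external)   # step 1: external sources
    existing = best_match(name, internal)    # step 2: internal master data
    # Prefer the reference data over what was typed, when it exists.
    enriched = dict(reference) if reference else {"name": name}
    if existing is not None:                 # step 3a: update, no duplicate
        existing.update(enriched)
        return existing, "updated"
    internal.append(enriched)                # step 3b: genuinely new entity
    return enriched, "inserted"
```

Called with a misspelled name such as "Acme Corporaton" against a reference list containing "Acme Corporation", the first call inserts one enriched record, and a later call with "ACME Corporation" updates that same record instead of creating a duplicate.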

Some essential capabilities in doing this are:

- Searching is error-tolerant, so you will find entities even if the spelling differs

- The receiving data model is aligned with the real world. This includes:

  - Party information and location information have separate lives, as explained in the post called A Place in Time

  - You may have multiple means of contact attached, such as several phones, email addresses and social identities
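A real-world-aligned model along these lines could be sketched as follows in Python. The class and field names (`Party`, `Location`, `PartyLocation`, `ContactPoint`, `valid_from`, `valid_to`) are hypothetical, and linking a party to a location through a dated relationship is my assumption about what "separate lives" could look like in practice, not a description of any specific product's schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Location:
    """A place that exists independently of who lives or works there."""
    address: str


@dataclass
class ContactPoint:
    """One means of contact; a party can have any number of these."""
    kind: str   # e.g. "phone", "email", "social"
    value: str


@dataclass
class Party:
    """An individual or a company, with no address baked in."""
    name: str
    contacts: List[ContactPoint] = field(default_factory=list)


@dataclass
class PartyLocation:
    """Links a party to a location for a period of time (assumed design)."""
    party: Party
    location: Location
    valid_from: date
    valid_to: Optional[date] = None   # None means the link is still current

    def active_on(self, day: date) -> bool:
        """True if the party was at this location on the given day."""
        started = self.valid_from <= day
        not_ended = self.valid_to is None or day <= self.valid_to
        return started and not_ended
```

With this shape, a customer who moves gets a new `PartyLocation` rather than an overwritten address, and the old location record stays available for whoever moves in next.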

How do you achieve a Single Customer View?