In the post Five Flavors of Big Data the last flavor mentioned is “big reference data”.

The typical example of a reference data set is a country table. This is of course a very small data set with around 250 entities. But even that can be complicated, as told in the post The Country List.

Reference data can be much bigger. Some flavors of big reference data are:

- Third-party data sources

- Open government data

- Crowd-sourced open reference data

- Social networks

Third-party data sources

The use of third-party data within Master Data Management is discussed in the post Third-Party Data and MDM. These data may also have wider use within the enterprise, not least within business intelligence.

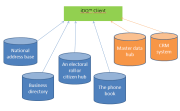

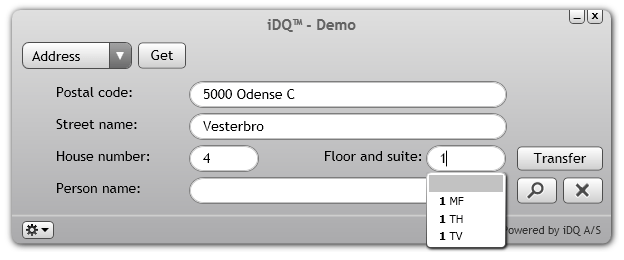

Examples of such data sets are business directories. The Dun & Bradstreet World Base, probably the best known one, today counts over 200 million business entities from all over the world. Other examples are address and property directories.

Open government data

The above-mentioned directories are often built on top of public sector data, which are becoming more and more open around the world. So an alternative is to dig directly into the government data.

Crowd-sourced open reference data

There are plenty of initiatives around where directories similar to the commercial and government directories are collected by crowd-sourcing and shared openly.

Social networks

In social networks, profile data are maintained by the entities in question themselves, which is a great advantage in terms of data timeliness.

If you are in London, please join the TDWI UK and IRM UK complimentary London meet-up on big data on 19th February 2014, where I will elaborate on the four flavors of big reference data.