In previous years, the posts on this blog close to Christmas have been about Multi-Domain MDM, Santa Style and Data Governance, Santa Style.

So this year it may be time to take a closer look at big data quality, Santa style: how we can imagine Santa Claus joining the rise of big data while observing that exploiting data, big or small, only adds real value if you believe in data quality. Ho ho ho.

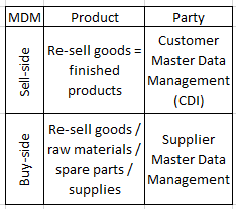

At the Santa Claus organization they have figured out that there is a close connection between excellence in working with big data and excellence in multi-domain Master Data Management (MDM) and data governance.

Here are some of the findings in the big data paper that the Chief Data Elf just signed off:

- The feasibility of the new algorithms for naughty or nice marking using social media listening combined with our historical records is heavily dependent on unique, accurate and timely boys and girls master data. The party data governance elf gathering will be accountable for any nasty and noisy issues.

- Implementation of the automated present buying service based on fuzzy matching between our supplier self-service based multi-lingual product catalogue and the wish list data lake must be done in a phased schedule. The product data governance elf committee are responsible for avoiding any false positives (wrong present incidents) and decreasing the number of false negatives (someone not getting what could be purchased within the budget).

- Last year we had a 12.25 % overspend on reindeer due to incorrect and missing chimney positions. This year the reliance on crowdsourced positions will be better balanced with utilizing open government property data where possible. The location data governance elves will consult with the elves living on the roof at each head of state in order to make them release more and better quality of any such data (the Gangnam Project).
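
For the technically minded elves, the trade-off between false positives and false negatives in the fuzzy matching finding can be sketched in a few lines. This is only a minimal illustration, assuming plain string similarity from Python's standard `difflib` module; the function names, the sample wishes and catalogue items, and the thresholds are all made up for the example and are not how the Santa Claus organization actually does it.

```python
from difflib import SequenceMatcher

def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity between two strings, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def match_wishes(wishes, catalogue, threshold):
    """Map each wish to its most similar catalogue item.

    A wish is only matched if the best similarity reaches the threshold.
    """
    matches = {}
    for wish in wishes:
        best = max(catalogue, key=lambda item: similarity(wish, item))
        if similarity(wish, best) >= threshold:
            matches[wish] = best
    return matches

# Hypothetical sample data, invented for this illustration.
catalogue = ["Red Bicycle", "Teddy bear"]
wishes = ["red bicyle", "xylophone"]

# A strict threshold leaves "xylophone" unmatched: no wrong present,
# but a possible false negative if a suitable item was listed differently.
strict = match_wishes(wishes, catalogue, threshold=0.8)

# A threshold of zero matches every wish to something: no one is left
# out, but "xylophone" becomes a false positive (a wrong present incident).
loose = match_wishes(wishes, catalogue, threshold=0.0)
```

Raising the threshold trades wrong presents for missed ones, which is one reason a phased schedule with careful tuning makes sense.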

But have any new trends shown up in the past years?

From my own experience of working predominantly with product master data during the last couple of years, there are issues and big pain points with product data. They are just different from the main pain points with party master data as examined in the post