The term “Subscriber Data Management”, with SDM as its three-letter acronym, is the telecommunications industry's flavor of the general term “Customer Data Management”.

Recently Teresa Cottam, research director of Telesperience, gave a good introduction to the subject in an interview on DataQualityPro.com.

Since we have the term “Customer Master Data Management”, we will also have the term “Subscriber Master Data Management”.

Based on my experience with phone companies, “Subscriber Master Data Management” is very much about (better) handling of the subscriber’s life cycle.

These are probably the five most important moments in a subscriber’s life cycle(s):

- A lead is born

- Engaging a prospect

- One more subscriber

- Churn happens

- Win-Back happiness

A lead is born

One of the most important things to do when capturing data at this point is checking whether you already have the person/business behind the subscriber somewhere in the life cycle, or maybe even in other party roles, as examined in the post 360° Business Partner View.
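The check described above can be sketched in a few lines of Python. This is only a minimal illustration, assuming a hypothetical in-memory list of party records with `name`, `email` and `roles` fields and an arbitrary similarity threshold of 0.85. A real implementation would typically rely on a dedicated matching engine and richer reference data.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not block a match."""
    return " ".join(text.lower().split())


def find_existing_party(lead, parties, threshold=0.85):
    """Return (party, score) for the best candidate at or above threshold, else None."""
    best = None
    for party in parties:
        # Assumption for this sketch: an identical email counts as a certain match.
        if lead.get("email") and normalize(lead["email"]) == normalize(party.get("email", "")):
            return party, 1.0
        score = SequenceMatcher(None, normalize(lead["name"]), normalize(party["name"])).ratio()
        if score >= threshold and (best is None or score > best[1]):
            best = (party, score)
    return best


# Hypothetical party master data covering more than one party role.
parties = [
    {"id": 1, "name": "John Smith", "email": "john@example.com", "roles": ["former subscriber"]},
    {"id": 2, "name": "Acme Telecom Ltd", "email": "info@acme.example", "roles": ["supplier"]},
]

lead = {"name": "john  smith", "email": "j.smith@example.org"}
match = find_existing_party(lead, parties)
if match:
    party, score = match
    print(f"Possible existing party {party['id']} ({', '.join(party['roles'])})")
```

Here the new lead is flagged as a possible match with a former subscriber, so the record can be reviewed and reused instead of a duplicate being created.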

Engaging a prospect

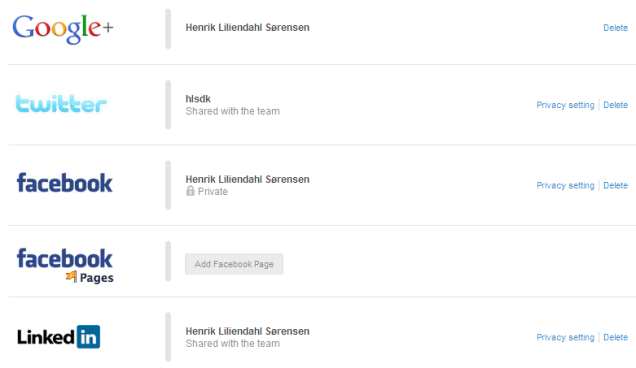

Much of the information prospects are asked about already exists somewhere in the cloud. Why not take advantage of these rich sources, as described in Reference Data at Work in the Cloud? By doing that you will need fewer keystrokes and have a much better chance of getting it right the first time.

One more subscriber

After a successful sales process a new subscriber can be added to the subscriber list, often with more data being captured, such as a billing address and credit risk details like credit limit and terms of payment.

This is the point where many party entities are split into data silos. Maybe the current subscriber master data lives on in sales-oriented systems while new subscriber data is re-entered and enriched in an ERP system and other business applications.

Keeping these data silos aligned is the master data challenge as discussed in the post Boiling Data Silos.

Churn happens

Churn is often seen as the termination of a given subscription. But did the person/business behind the subscription really quit, or is the service still covered by other subscriptions held by the same person, by the household or within a company family tree?

Is the person no longer among us, or did the business dissolve?

Such questions can be answered better if you are practicing Ongoing Data Maintenance.
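As a rough sketch of the first question, the snippet below classifies a terminated subscription by looking at what else is still active around it. The record layout is hypothetical: `party_id` stands for the person/business and `group_id` for a household or company family tree. Both identifiers are assumed to be maintained already in the master data.

```python
def classify_termination(subscription, subscriptions):
    """Classify a terminated subscription by the active subscriptions around it."""
    others = [s for s in subscriptions
              if s["active"] and s["id"] != subscription["id"]]
    # Same person/business still holds another active subscription.
    if any(s["party_id"] == subscription["party_id"] for s in others):
        return "same party still subscribes"
    # Someone in the same household or company family still subscribes.
    if any(s["group_id"] == subscription["group_id"] for s in others):
        return "household or company family still subscribes"
    return "real churn"


# Hypothetical subscription records.
subscriptions = [
    {"id": "S1", "party_id": "P1", "group_id": "G1", "active": False},
    {"id": "S2", "party_id": "P2", "group_id": "G1", "active": True},
    {"id": "S3", "party_id": "P3", "group_id": "G2", "active": False},
]

for sub in subscriptions:
    if not sub["active"]:
        print(sub["id"], "->", classify_termination(sub, subscriptions))
```

In this example S1 is not a real churn, because another member of the same group still has an active subscription, while S3 is.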

Win-Back happiness

If a person or business really did quit but then comes back, be sure to build on the data from the first engagement rather than starting from scratch again with capturing master data and history. Avoiding a fresh start takes care of some of the 55 reasons to improve data quality related to party master data uniqueness.
