Yesterday there were some blog posts dealing with data silos.

Graham Rhind posted: Data silos – learn to live with them.

Rob Karel posted: Stop trying to put a monetary value on data – it’s the wrong path. Though it was not the main subject, the post included this remark: “Attempting to boil the ocean and trying to solve Customer, Product, or Financial data for all processes and decisions across the whole organization is too big an effort destined to fail before it starts”.

Mark Montgomery commented on Rob’s post: “I also have trouble with the boil the ocean metaphor, which is used too often these days to justify all kinds of protectionist policies in the enterprise. You can’t have it both ways in the enterprise– either you have data silos or you don’t, and I argue that increasingly the world cannot afford them, albeit in highly secure formats in most situations”.

I guess we have to go for the golden mean on this one too. We shouldn’t accept data silos, but we must expect them. We could aim to eliminate them, probably not in one big bang but slice by slice, as we climb the levels of an information maturity model.

I would definitely expect to see fewer and smaller data silos at the top level of an information maturity model than at the bottom level of a data quality immaturity model.

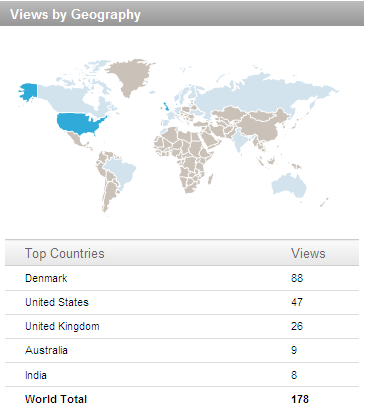

A comment on my last blog post