Master Data comprises the core entities that describe the ongoing activities in an organization:

- Business partners (who)

- Products (what)

- Locations (where)

- Timetables (when)

Many Master Data Management and Data Quality initiatives focus at first on a single entity type, but sooner or later you have to deal with all entity types and with the data quality issues that arise from combining data across them.

In my experience, business partner data quality issues are in many ways similar across industry verticals, while product master data challenges can differ considerably from one vertical to another. The importance of location data quality also varies widely, as do the questions about timetable data quality.

A journey in a multi-entity master data world

My latest experience in multi-entity master data quality comes from public transportation.

The most frequent business partner role here is of course the passenger. By the way (so to speak): a passenger may be a direct customer, but the payer may also be someone else. Whether or not the passenger is defined as a customer doesn’t change the need for data quality: you still have to solve problems with uniqueness and real-world alignment.

The product sold to a passenger is in the first place a travel document, such as a single ticket or an electronic card holding a season pass. But the service that has value for the passenger is a ride from point A to point B, which in many cases is delivered as a trip consisting of a series of rides from point A via point C (and D…) to point B. Consistent hierarchies in reference data are a must when making data fit for multiple purposes in disciplines such as fare collection, scheduling and so on.

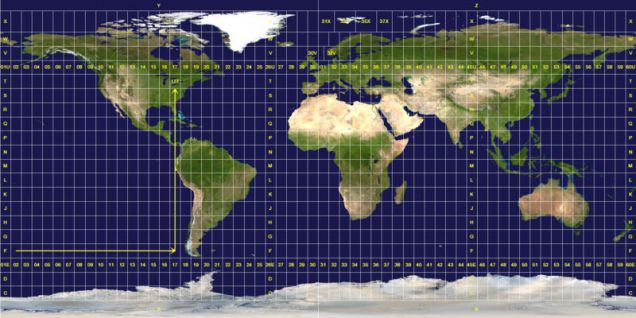

Locations are mainly stop points including those at the start and end of the rides. These are identified both by a name and by geocoding – either as latitude and longitude on a round globe or by coordinates in a flat representation suitable for a map (on a screen). The distance between stops is important for grouping stops in areas suitable for interchange, e.g. bus stops on each side of a road or bus stops and platforms at a rail station. Working with the precision dimension of data quality is a key to accuracy here.
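To make the point about distance and precision concrete, here is a minimal sketch of grouping stops into interchange areas from their latitude and longitude. It is only an illustration: the stop names, the coordinates and the 50 metre threshold are hypothetical, and the great-circle (haversine) distance with single-linkage grouping is one possible approach, not necessarily what a real solution would use.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def group_stops(stops, max_distance_m=50.0):
    """Group stops so that any two stops within max_distance_m end up in the same area.

    `stops` maps a stop name to a (latitude, longitude) tuple.
    Uses a small union-find structure; fine for a sketch, quadratic in the number of stops.
    """
    names = list(stops)
    parent = {name: name for name in names}

    def find(name):
        # Follow parent links to the representative of the group, compressing the path.
        while parent[name] != name:
            parent[name] = parent[parent[name]]
            name = parent[name]
        return name

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if haversine_m(*stops[a], *stops[b]) <= max_distance_m:
                parent[find(a)] = find(b)

    groups = {}
    for name in names:
        groups.setdefault(find(name), []).append(name)
    return sorted(sorted(group) for group in groups.values())


# Hypothetical stops: two on opposite sides of a road, one far away.
example_stops = {
    "Main Street (eastbound)": (55.58030, 12.28300),
    "Main Street (westbound)": (55.58040, 12.28330),
    "Harbour": (55.59000, 12.30000),
}
example_areas = group_stops(example_stops)
```

The fourth decimal place of a latitude corresponds to roughly 11 metres, so coordinates stored with fewer decimals than that can easily push two stops across a threshold like the one assumed here.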

Timetables change over time. It is essential to keep track of timetable validity in offline flyers, websites with passenger information, back office systems and on-board bus computers. Timeliness is as ever vital here.
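Keeping track of validity could look something like the sketch below, which picks the timetable version in force on a given day and refuses to guess when two versions overlap. The `TimetableVersion` fields, the route number and the dates are all hypothetical and only serve the illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class TimetableVersion:
    route: str
    version: str
    valid_from: date
    valid_to: Optional[date]  # None means open-ended validity


def version_in_force(versions: List[TimetableVersion], route: str, on_day: date) -> Optional[TimetableVersion]:
    """Return the single version valid for `route` on `on_day`, or None if there is none."""
    matches = [
        v for v in versions
        if v.route == route
        and v.valid_from <= on_day
        and (v.valid_to is None or on_day <= v.valid_to)
    ]
    if len(matches) > 1:
        # Overlapping validity periods are a data quality issue in themselves.
        raise ValueError(f"Overlapping timetable versions for route {route} on {on_day}")
    return matches[0] if matches else None


# Hypothetical versions for one route.
example_versions = [
    TimetableVersion("10", "winter", date(2024, 1, 1), date(2024, 5, 31)),
    TimetableVersion("10", "summer", date(2024, 6, 1), None),
]
```

Every channel that publishes the timetable (flyer, website, back office, on-board computer) can then be checked against the same answer for the same day.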

Transactions are made by drivers and passengers in numerous on-board computers, by employees in back office systems, and also arrive from external sources. Matching them correctly with the various master data entities that describe each transaction is paramount for an effective daily operation, and it is the foundation for exploiting the data in order to make the right decisions for future services.
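As a rough sketch of what such matching involves, the function below checks each master data key carried by a transaction against the known records for that entity type and reports the ones that do not resolve. The entity names (`card`, `stop`, `timetable`) and all identifiers are hypothetical; a real operation would also have to handle fuzzy matches and validity periods.

```python
from typing import Dict, List, Set


def unmatched_references(transaction: Dict[str, str], master: Dict[str, Set[str]]) -> List[str]:
    """Return the entity types whose key in the transaction has no master data record.

    A missing key in the transaction also counts as unmatched.
    """
    return [
        entity
        for entity, known_ids in master.items()
        if transaction.get(entity) not in known_ids
    ]


# Hypothetical master data: known identifiers per entity type.
example_master = {
    "card": {"C-1", "C-2"},
    "stop": {"S-1", "S-2"},
    "timetable": {"T-2024"},
}

# Hypothetical boarding transaction that refers to an unknown stop.
example_transaction = {"card": "C-1", "stop": "S-9", "timetable": "T-2024"}
example_problems = unmatched_references(example_transaction, example_master)
```

Transactions with a non-empty result are the ones to route to a data steward instead of letting them flow silently into reporting.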

The business case is within public transit. In this particular solution passengers use chip cards when boarding buses, but not when alighting. This is a cheaper and smoother solution than the alternative in electronic ticketing, where you have both check-in and check-out. But a major drawback is the missing information about where passengers alighted, which is very useful in business intelligence.
