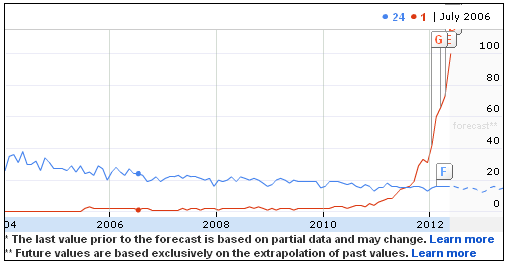

Two years ago I wrote a blog post about how 5 billion Euros were lost due to bad identity resolution at European authorities. The post was called Big Time ROI in Identity Resolution.

In the carbon trade scam, criminals were able to trick authorities with fraudulent names and addresses.

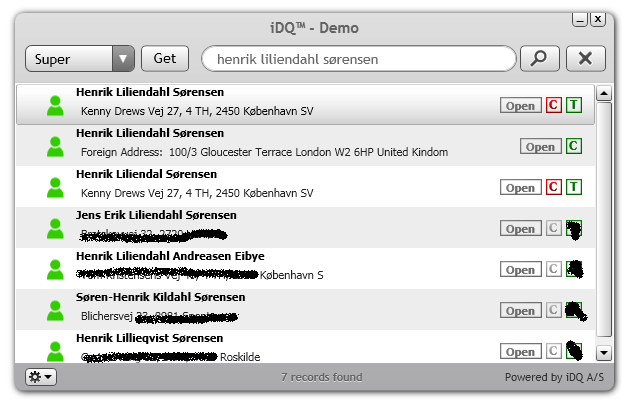

One way the fraudsters’ pattern of interrelated names and physical and digital locations could have been discovered was, as explained in the post, to use an “off the shelf” data matching tool in order to achieve what is sometimes called non-obvious relationship awareness. When examining the data I used the Omikron Data Quality Center.
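To make the idea of non-obvious relationship awareness a bit more concrete, here is a minimal sketch in Python. It is not how the Omikron tool works; it only assumes that two registrations are linked when their names or addresses look alike or when they share a digital location, and then groups linked registrations into clusters. The records, the field names and the 0.85 similarity threshold are all made up for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical registrations; every value here is invented for illustration.
RECORDS = [
    {"id": 1, "name": "Nordic Carbon Trading ApS", "address": "Main Street 12, 2nd floor", "ip": "203.0.113.7"},
    {"id": 2, "name": "Nordic Carbon Tradeing", "address": "Harbour Road 4", "ip": "198.51.100.20"},
    {"id": 3, "name": "Green Quota Ltd", "address": "Main Str. 12, 2. floor", "ip": "192.0.2.44"},
    {"id": 4, "name": "Blue Sky Offsets", "address": "Mill Lane 9", "ip": "198.51.100.20"},
    {"id": 5, "name": "Unrelated Bakery", "address": "Church Square 1", "ip": "192.0.2.99"},
]


def normalize(text):
    """Lowercase, replace punctuation with spaces and collapse whitespace."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return " ".join(cleaned.split())


def similar(a, b, threshold=0.85):
    """Fuzzy comparison of two strings; the threshold is an assumption."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= threshold


def find_clusters(records):
    """Group records that are linked directly or through other records."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x):
        # Follow parent pointers to the cluster root, shortening the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in combinations(records, 2):
        linked = (
            similar(a["name"], b["name"])
            or similar(a["address"], b["address"])
            or a["ip"] == b["ip"]
        )
        if linked:
            parent[find(a["id"])] = find(b["id"])

    clusters = {}
    for r in records:
        clusters.setdefault(find(r["id"]), []).append(r["id"])
    # Only clusters with more than one member are interesting.
    return [ids for ids in clusters.values() if len(ids) > 1]


print(find_clusters(RECORDS))
```

In this toy data, registrations 3 and 4 have nothing directly in common, yet they end up in the same cluster because each is linked to it through another registration. That indirect connection is the non-obvious part.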

Another and more proactive way would have been upstream prevention by screening identity at data capture.

Identity checking can be a lot of work that you don’t want to include in business processes with a high volume of master data capture, and not least screening the identity of companies and individuals on foreign addresses seems a daunting task.

One way to cut the time spent on identity screening across many countries is to use a service that embraces many data sources from many countries at the same time. A core technology in doing so is cloud service brokerage. Here your IT department only has to deal with one interface, as opposed to having to find, test and maintain hundreds of different cloud services to make the right data available in business processes.
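The brokerage idea can be sketched in a few lines of Python. This is not the API of any real product; `IdentityBroker`, `in_memory_source` and the two registers below are hypothetical stand-ins, and a real source would be a remote service rather than an in-memory set. The point is only that the data capture process calls one `screen` method, while the broker decides which country-specific source to ask.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple

# A lookup takes a name and an address and says whether the pair is known.
Lookup = Callable[[str, str], bool]


@dataclass(frozen=True)
class ScreeningResult:
    country: str
    source: str       # label of the underlying source that answered
    confirmed: bool   # True if name and address were found together


def in_memory_source(known: Set[Tuple[str, str]]) -> Lookup:
    """Hypothetical stand-in for one country-specific reference data service."""
    def lookup(name: str, address: str) -> bool:
        return (name.strip().lower(), address.strip().lower()) in known
    return lookup


class IdentityBroker:
    """Single interface in front of many country-specific sources."""

    def __init__(self) -> None:
        self._sources: Dict[str, Tuple[str, Lookup]] = {}

    def register(self, country: str, label: str, lookup: Lookup) -> None:
        self._sources[country.upper()] = (label, lookup)

    def screen(self, name: str, address: str, country: str) -> ScreeningResult:
        code = country.upper()
        if code not in self._sources:
            return ScreeningResult(code, "no source registered", False)
        label, lookup = self._sources[code]
        return ScreeningResult(code, label, lookup(name, address))


def build_demo_broker() -> IdentityBroker:
    # Both registers and their contents are invented for illustration.
    broker = IdentityBroker()
    broker.register(
        "DK", "hypothetical Danish business register",
        in_memory_source({("nordic carbon trading aps", "main street 12")}),
    )
    broker.register(
        "GB", "hypothetical UK company register",
        in_memory_source({("green quota ltd", "mill lane 9")}),
    )
    return broker


broker = build_demo_broker()
print(broker.screen("Nordic Carbon Trading ApS", "Main Street 12", "dk"))
print(broker.screen("Ghost Trading", "Nowhere 1", "DK"))
```

Adding a new country then means registering one more source with the broker, without touching the business process that calls `screen`.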

Right now I’m working with such a solution called instant Data Quality (iDQ).

I really hope there are more organisations and organizations out there wanting to avoid losing 5 billion Euros, Pounds, Dollars, Rupees, whatever, or even a little bit less.