Reference Data is a term often used either instead of Master Data or as related to Master Data. Reference data are data defined and (initially) maintained outside a single organisation. Examples from the party master data realm are a country list, a list of states in a given country, or postal code tables for countries around the world.

The trend is that organisations seek to benefit from having reference data in more depth than the often modestly populated lists mentioned above.

In the party master data realm such reference data may be core data about:

- Addresses being every single valid address typically within a given country.

- Business entities being every single business entity occupying an address in a given country.

- Consumers (or Citizens) being every single person living at an address in a given country.

There is often no single source of truth for such data. Some of the challenges I have met for each type of data are:

Addresses

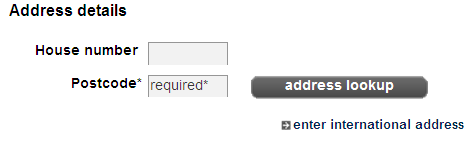

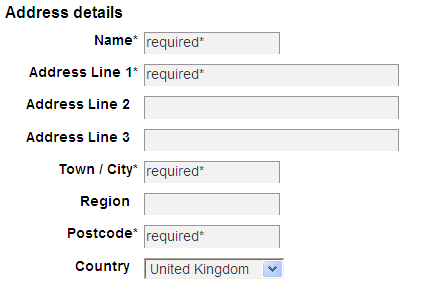

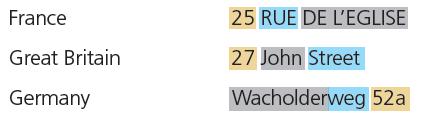

The depth (or precision if you like) of an address is a common problem. If the depth of address data is at the level of building numbers on streets (thoroughfares) or blocks, you have issues as described in the blog post called Multi-Occupancy.

Address reference data of course also have issues with common data quality dimensions such as:

- Timeliness, because for example new addresses will exist in the real world but not yet in a given address directory.

- Accuracy, as it is always amazing to compare two official sources that should contain the same elements but do not.
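Both dimensions show up quickly when two address directories are compared side by side. The following is only a minimal sketch in Python, assuming each source can be reduced to a dictionary keyed by a shared address identifier. The function name `compare_sources`, the source names and the sample records are all hypothetical and made up for illustration.

```python
def compare_sources(source_a, source_b):
    """Compare two address directories keyed by a shared address identifier.

    Returns the identifiers missing from either side (a hint of timeliness
    issues) and those present in both with different content (a hint of
    accuracy issues).
    """
    keys_a, keys_b = source_a.keys(), source_b.keys()
    return {
        "only_in_a": sorted(keys_a - keys_b),
        "only_in_b": sorted(keys_b - keys_a),
        "conflicting": sorted(
            key for key in keys_a & keys_b if source_a[key] != source_b[key]
        ),
    }


# Hypothetical sample data: identifier -> (street address, postal code).
postal_directory = {
    "A1": ("1 Main Street", "1000"),
    "A2": ("2 Main Street", "1000"),
    "A3": ("3 Main Street", "1000"),
}
land_registry = {
    "A1": ("1 Main Street", "1000"),
    "A2": ("2 Main St", "1000"),
    "A4": ("4 Main Street", "1000"),
}

report = compare_sources(postal_directory, land_registry)
print(report)
```

In practice two sources rarely share an identifier, so a real comparison would need address standardisation and matching first. The sketch only shows what is being measured once that matching is done.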

Business Entities

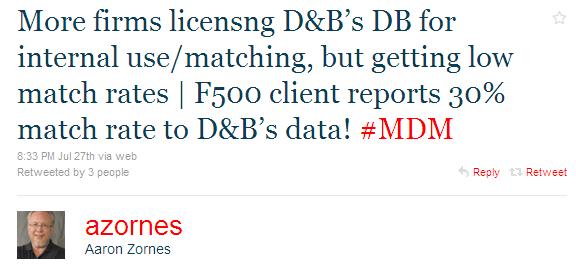

Business directories have been accessible for many years and are often used when handling business-to-business (B2B) customer master data and supplier master data management. Some hurdles in doing this are:

- Uniqueness, as your view of what a given business entity is occasionally doesn't match the view in the business directory, as discussed in the post 3 out of 10.

- Conformity, because for example an apparently simple exercise such as assigning an industry vertical can be a complex matter, as mentioned in the post What are they doing?

Consumers (or Citizens)

In business-to-consumer (B2C) or other activities involving citizens, a huge challenge is identifying the individuals living on this planet, as pondered in the post Create Table Homo Sapiens. Some troubles are:

- Consistency isn’t easy, as governments around the world have found 240 (or so) different solutions to balancing privacy concerns and administrative effectiveness.

- Completeness, as the rules and traditions differ not only between countries, but also between industries, activities and channels.

Big Reference Data as a Service

Even though I have emphasized some data quality dimensions for each type of data, all dimensions apply to all types of data.

For organisations operating multinationally and/or across multiple channels, exploiting the wealth and diversity of external reference data is a daunting task.

This is why I see reference data as a service embracing many sources as a good opportunity for getting data quality right the first time. There is more on this subject in the post Reference Data at Work in the Cloud.