A common technique for assessing data quality is data profiling. For example, you may count measures such as the number of fields in a table that have null or blank values, the distribution of filled lengths in a certain field, average values, highest values, lowest values and so on.
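As a minimal sketch of such measures, the Python below profiles one text field and one numeric field across a list of rows. The function names, the field names (`postal_code`, `credit_limit`) and the sample rows are made up for illustration; they are not taken from any particular profiling tool.

```python
from collections import Counter


def profile_text_field(rows, field):
    """Count nulls, blanks and the distribution of filled lengths for one field."""
    values = [row.get(field) for row in rows]
    nulls = sum(1 for v in values if v is None)
    blanks = sum(1 for v in values if v is not None and v.strip() == "")
    filled = [v.strip() for v in values if v is not None and v.strip() != ""]
    return {
        "rows": len(values),
        "null": nulls,
        "blank": blanks,
        # Maps filled length -> number of rows with that length.
        "length_distribution": dict(Counter(len(v) for v in filled)),
    }


def profile_numeric_field(rows, field):
    """Lowest, highest and average value, ignoring rows where the field is null."""
    numbers = [row[field] for row in rows if row.get(field) is not None]
    if not numbers:
        return None
    return {
        "min": min(numbers),
        "max": max(numbers),
        "avg": sum(numbers) / len(numbers),
    }


# Hypothetical sample rows.
customers = [
    {"name": "Acme Ltd", "postal_code": "2100", "credit_limit": 5000},
    {"name": "Jane Doe", "postal_code": "", "credit_limit": None},
    {"name": "Beta Corp", "postal_code": None, "credit_limit": 1000},
    {"name": "John Roe", "postal_code": "SW1A 1AA", "credit_limit": 3000},
]

postal_profile = profile_text_field(customers, "postal_code")
credit_profile = profile_numeric_field(customers, "credit_limit")
```

Here null and blank values are counted separately, since they often point to different causes, for example a field never captured versus a field captured as empty.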

If we look at the most prominent entity types in master data management, customers and products, you may certainly profile your customer tables and product tables too, and indeed many data profiling tutorials use these common sorts of tables as examples.

However, in real life profiling an entire customer table or product table will often be quite meaningless. You need to dig into the hierarchies in these data domains to get meaningful measures for your data quality assessment.

Customer master data

When profiling customer master data you must consider the different types of party master data, such as business entities, department entities, consumer entities and contact entities, because the demands for completeness differ for each type. If your raw data doesn’t have a solid categorization in place, a prerequisite for data profiling will often be to make such a categorization before going any further.
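A small sketch of what completeness per party type could look like in Python follows. The `party_type` field, the four type names and the required fields listed for each type are assumptions for illustration only; your own requirements will differ. Rows without a recognized type are counted separately, since they would need categorizing first.

```python
from collections import defaultdict

# Hypothetical completeness requirements per party type.
REQUIRED_FIELDS = {
    "business": ["legal_name", "registration_number"],
    "department": ["legal_name", "department_name"],
    "consumer": ["first_name", "last_name"],
    "contact": ["first_name", "last_name", "email"],
}


def is_filled(value):
    """A value counts as filled when it is neither null nor blank."""
    return value is not None and str(value).strip() != ""


def completeness_by_party_type(rows, required=REQUIRED_FIELDS):
    """Return the fill ratio per (party type, field) and the uncategorized row count."""
    filled = defaultdict(int)
    totals = defaultdict(int)
    uncategorized = 0
    for row in rows:
        party_type = row.get("party_type")
        if party_type not in required:
            uncategorized += 1
            continue
        for field in required[party_type]:
            totals[(party_type, field)] += 1
            if is_filled(row.get(field)):
                filled[(party_type, field)] += 1
    ratios = {key: filled[key] / totals[key] for key in totals}
    return ratios, uncategorized


# Hypothetical sample rows.
parties = [
    {"party_type": "business", "legal_name": "Acme Ltd", "registration_number": "12345678"},
    {"party_type": "business", "legal_name": "Beta Corp", "registration_number": ""},
    {"party_type": "consumer", "first_name": "Jane", "last_name": "Doe"},
    {"party_type": None, "legal_name": "Unknown"},
]

ratios, uncategorized = completeness_by_party_type(parties)
```

Measured this way, a missing registration number lowers the score only for business entities and never counts against a consumer.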

If your customer data model isn’t too simple, as explained in the post A Place in Time, your location data (such as shipping addresses, billing addresses and visiting addresses) will be separated from your customer naming and identification data. This hierarchical structure must be considered in your data profiling.
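One way to take this separation into account is to profile address coverage across the two structures rather than within a single table. The sketch below assumes a hypothetical model where each location row carries a `customer_id` and an `address_type`; both names are invented for the example.

```python
from collections import defaultdict


def address_coverage(customers, locations, address_types):
    """Share of customers having at least one address of each given type."""
    types_by_customer = defaultdict(set)
    for location in locations:
        types_by_customer[location["customer_id"]].add(location["address_type"])

    total = len(customers)
    coverage = {}
    for address_type in address_types:
        with_type = sum(
            1 for c in customers
            if address_type in types_by_customer[c["customer_id"]]
        )
        coverage[address_type] = with_type / total if total else 0.0
    return coverage


# Hypothetical sample data: naming data and location data kept apart.
customer_rows = [
    {"customer_id": 1, "name": "Acme Ltd"},
    {"customer_id": 2, "name": "Jane Doe"},
]
location_rows = [
    {"customer_id": 1, "address_type": "shipping", "city": "Copenhagen"},
    {"customer_id": 1, "address_type": "billing", "city": "Copenhagen"},
    {"customer_id": 2, "address_type": "billing", "city": "Aarhus"},
]

coverage = address_coverage(
    customer_rows, location_rows, ["shipping", "billing", "visiting"]
)
```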

For international customer data the demands and possibilities for completeness of customer data elements will also differ, for example from country to country.

Depending on your industry and way of doing business there may also be different demands for customer data related to different industry verticals, demographic groups and data sourced in different channels. However, this may be slippery ground, as current and not least future requirements for multiple uses of the same master data may change the picture.

Product master data

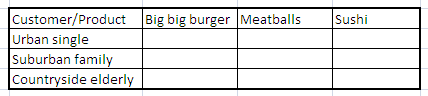

For most businesses the requirements for completeness and other data profiling measures will be very different depending on the product type.

Some requirements will only apply to a small range of products; other requirements apply to a broader range of products.
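To illustrate, completeness requirements can be attached to nodes in the product hierarchy and inherited downwards, so that broad requirements sit near the top and narrow ones near the leaves. The hierarchy, category names and attribute names in this Python sketch are hypothetical.

```python
# Hypothetical product hierarchy: each category points to its parent.
PARENT = {
    "all products": None,
    "power tools": "all products",
    "drills": "power tools",
    "clothing": "all products",
}

# Hypothetical requirements attached to the node where they start to apply.
REQUIRED = {
    "all products": ["name", "gtin"],
    "power tools": ["voltage"],
    "drills": ["chuck_size"],
    "clothing": ["size", "color"],
}


def required_attributes(category):
    """Collect requirements from the root down to the given category."""
    attributes = []
    while category is not None:
        attributes = REQUIRED.get(category, []) + attributes
        category = PARENT[category]
    return attributes


def missing_attributes(product):
    """List the required attributes that are null or blank for one product."""
    return [
        attribute
        for attribute in required_attributes(product["category"])
        if product.get(attribute) in (None, "")
    ]


# Hypothetical sample products.
drill = {"category": "drills", "name": "Hammer drill", "gtin": "05701234567890", "voltage": 18}
shirt = {"category": "clothing", "name": "T-shirt", "size": "M", "color": ""}

drill_missing = missing_attributes(drill)
shirt_missing = missing_attributes(shirt)
```

With this structure a drill is never penalized for lacking a size or color, while a requirement set on the root node applies to every product.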

All in all, the data profiling requirements are an integrated part of hierarchy management for product master data, which makes a very strong case for having data profiling capabilities implemented as part of a product information management (PIM) solution.

Multi-Domain Master Data Management

For master data management solutions embracing both customer data integration (CDI) and product information management (PIM), integrated capabilities for profiling customer master data, location master data and product master data as part of hierarchy management make a lot of sense.

Improving data quality isn’t a one-off activity but a continuous program, and the same goes for measuring the completeness of your master data of any kind.
