Today it was announced that IBM is to acquire Netezza, a data warehouse appliance vendor.

Five years ago, I guess, interest in data warehouse appliances was very sparse. I guess so because I attended a session held by Netezza at the 2005 London Information Management conference. There were three of us in the room: the presenter, one truly interested delegate and me. I was basically there because I was the next speaker in that room and wanted to see how things worked. For the record: it was a good session, and I learned a lot about appliances.

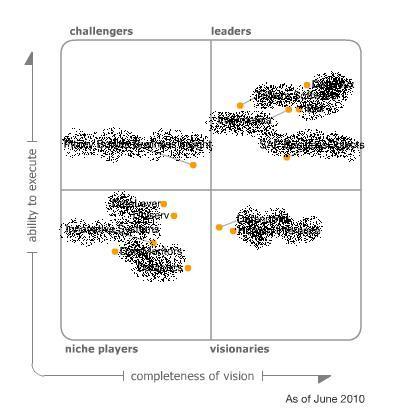

That is probably why I noticed a piece from 2007 in which Philip Howard of Bloor wrote about The scope for appliances. In that article Philip Howard also suggested other types of appliances, for example a data quality (data matching) appliance.

I have been involved in some implementations where we could have used the power of an appliance when matching a lot of rows. The Achilles’ heel in data matching is candidate selection, and often you have to restrict your methods in order to maintain reasonable performance.
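To illustrate why candidate selection is the weak spot, here is a minimal sketch in Python of one common way to do it, often called blocking. It is not taken from any of the implementations mentioned above. The record fields, the `blocking_key` rule (first three letters of the name plus postal code) and the `0.85` threshold are all assumptions made up for the example.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations


def blocking_key(record):
    # Hypothetical key: first three letters of the name plus the postal code.
    return (record["name"][:3].lower(), record["postal_code"])


def candidate_pairs(records):
    # Group record indexes by blocking key, then pair up records only
    # within the same block instead of comparing every row to every row.
    blocks = defaultdict(list)
    for idx, record in enumerate(records):
        blocks[blocking_key(record)].append(idx)
    for members in blocks.values():
        yield from combinations(members, 2)


def match(records, threshold=0.85):
    # Run the (comparatively expensive) fuzzy comparison on candidates only.
    matches = []
    for i, j in candidate_pairs(records):
        a = records[i]["name"].lower()
        b = records[j]["name"].lower()
        score = SequenceMatcher(None, a, b).ratio()
        if score >= threshold:
            matches.append((i, j, score))
    return matches


# Made-up sample rows, for illustration only.
records = [
    {"name": "Jonathan Smith", "postal_code": "2100"},
    {"name": "Jonathon Smith", "postal_code": "2100"},
    {"name": "Jon Smith", "postal_code": "2100"},
    {"name": "Johnathan Smith", "postal_code": "2100"},
    {"name": "Maria Jensen", "postal_code": "8000"},
]

if __name__ == "__main__":
    print(list(candidate_pairs(records)))
    print(match(records))
```

With these five rows the blocking step hands only 3 of the 10 possible pairs to the fuzzy comparison, which is where the performance comes from. The price is that "Johnathan Smith" lands in a different block and is never compared to the others. That is the kind of restriction on your methods that more raw power could let you relax.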

But I wonder whether I will ever see an on-premise data quality (data matching) appliance, or whether it will be placed in the cloud instead. Or maybe there already is one out there? If so, please tell me about it.