I’ve just investigated a suspicious customer data match:

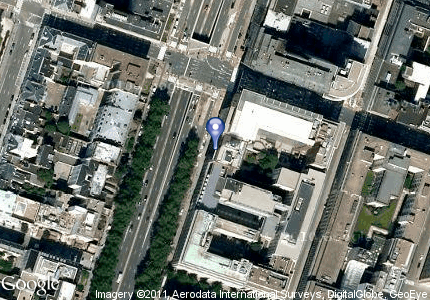

A Company on Kunstlaan no 99 in Brussel

was matched with high confidence with:

The Company on Avenue des Arts no 99 in Bruxelles

At first glance it perhaps didn’t look like a confident match, but I guess the computer is right.

The relevant facts are:

- Brussels is the Belgian capital

- Belgium has two main languages: French and Flemish (a variant of Dutch)

- Some parts of the country are French-speaking, some parts are Flemish-speaking and the capital is both

- Brussels is Bruxelles in French and Brussel in Flemish

- Kunst is Flemish for Art (as in Dutch, German and the Scandinavian languages too)

- Laan is Flemish for Avenue (same origin as Lane I guess)

- Avenue des Arts is French for Avenue of the Arts (French is easy)

Technically, the computer did the following in this case:

- Compared the names “A Company” and “The Company” and found a small edit distance between the two.

- Remembered from some earlier occasions that “Kunstlaan” and “Avenue des Arts” were accepted as a match.

- Remembered from numerous earlier occasions that “Brussel” (or “Brüssel”) and “Bruxelles” were accepted as a match.
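To make those three steps a bit more concrete, here is a minimal Python sketch. It is not the actual matching engine; the names `edit_distance`, `name_similarity`, `AcceptedPairs` and `field_matches` are made up for illustration, and the idea of simply counting earlier accepted pairs is my own simplification of what a probabilistic learning element might do.

```python
from collections import Counter


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance between two strings (dynamic programming)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def name_similarity(a: str, b: str) -> float:
    """Scale the edit distance to a 0..1 similarity (1 means identical)."""
    a, b = a.lower(), b.lower()
    longest = max(len(a), len(b)) or 1
    return 1 - edit_distance(a, b) / longest


class AcceptedPairs:
    """Hypothetical memory of value pairs that were accepted as matches before."""

    def __init__(self) -> None:
        self._counts: Counter = Counter()

    @staticmethod
    def _key(a: str, b: str) -> frozenset:
        # Order and letter case should not matter when remembering a pair.
        return frozenset((a.lower(), b.lower()))

    def accept(self, a: str, b: str) -> None:
        self._counts[self._key(a, b)] += 1

    def seen(self, a: str, b: str) -> int:
        return self._counts[self._key(a, b)]


def field_matches(a: str, b: str, memory: AcceptedPairs, min_seen: int = 1) -> bool:
    """Two values match if they are equal or were accepted often enough before."""
    return a.lower() == b.lower() or memory.seen(a, b) >= min_seen
```

With a memory that has already accepted “Kunstlaan” / “Avenue des Arts” and “Brussel” / “Bruxelles”, both address fields would pass `field_matches`, and `name_similarity("A Company", "The Company")` would supply the name part of the score. How the parts are weighted into one confidence figure is left open here.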

It may also have been told beforehand that “Kunstlaan” and “Avenue des Arts” are two names for the same street, using some Belgian address reference data, which I guess is a must when doing heavy data matching on the Belgian market.
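As a rough illustration of the reference data route, the sketch below maps every known spelling of a city and street to one shared identifier. The tables, the identifier `BE-BRU-0001` and the function names are invented for this example; real Belgian address reference data would of course be far larger and structured differently.

```python
from typing import Optional, Tuple

# Hypothetical hand-made reference data; the street id is made up.
CITY_ALIASES = {
    "brussel": "brussels",
    "bruxelles": "brussels",
    "brüssel": "brussels",
    "brussels": "brussels",
}

STREET_ALIASES = {
    ("brussels", "kunstlaan"): "BE-BRU-0001",
    ("brussels", "avenue des arts"): "BE-BRU-0001",
}


def street_id(city: str, street: str) -> Optional[str]:
    """Look up the shared street id, or None if the address is unknown."""
    city_key = CITY_ALIASES.get(city.strip().lower())
    if city_key is None:
        return None
    return STREET_ALIASES.get((city_key, street.strip().lower()))


def same_street(addr_a: Tuple[str, str], addr_b: Tuple[str, str]) -> bool:
    """True only when both (city, street) pairs resolve to the same known id."""
    id_a = street_id(*addr_a)
    id_b = street_id(*addr_b)
    return id_a is not None and id_a == id_b
```

Here `same_street(("Brussel", "Kunstlaan"), ("Bruxelles", "Avenue des Arts"))` is true without any learning involved, while an address missing from the tables never matches on this rule alone.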

In this case it was a global match environment not equipped with worldwide address reference data, so luckily the probabilistic learning element in the computer program saved the day.