Currently I’m travelling a lot between my present home in London, United Kingdom, and Copenhagen, Denmark, where most of my family lives and where the iDQ headquarters is.

When flying between London and Copenhagen you pass over the southern North Sea. In the old days (8,000 years ago) this area was dry land inhabited by human beings. This ancient land is known today as Doggerland.

Sometimes I feel like a citizen of Doggerland, not really belonging in either the United Kingdom or Denmark.

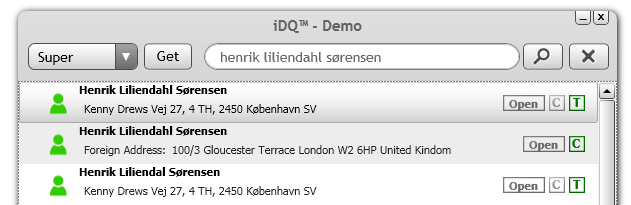

I still have some phone subscriptions in Denmark that my family and I use there. The phone company seems to have a hard time getting a 360-degree customer view, as I have two different spellings of my name and two different addresses, as seen on the screen when I look myself up in the iDQ service:

Besides having a Customer Relationship Mess (CRM), the phone company has recently changed its outsourcing partner (from CSC to TCS). This has caused a lot of additional mess, apparently including the closing of one of my subscriptions because they failed to register my payments. They say they did send a chaser, but to the older of the two addresses, where I don’t pick up mail anymore.

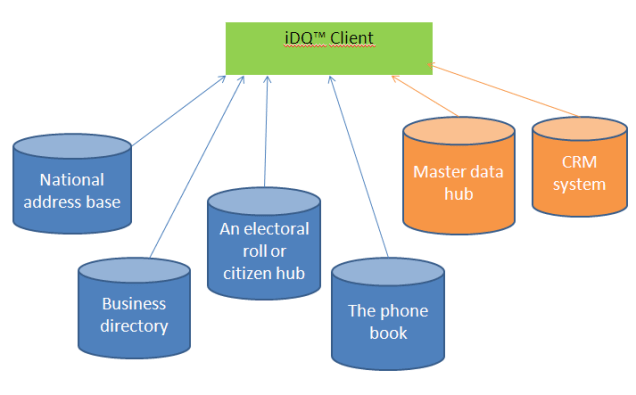

I called to settle the matter and asked if they could correct the address that is no longer in use. They couldn’t. The operator did some kind of query into the citizen hub similar to what I can do on iDQ:

However, the customer service guy’s screen just showed that I have no address in Denmark in the citizen hub (called CPR), so he couldn’t change the address.

Apparently the phone company correctly picked up an accurate address from the citizen hub when I got the subscription, but failed to update it (along with the other subscriptions) when I moved to another domestic address. And now it doesn’t have an adequate business rule for the case where I’m registered at a foreign address.
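To make the missing business rule concrete, here is a minimal sketch in Python of how such a rule could look. It is not how the phone company or iDQ actually works. The names `RegistryRecord` and `resolve_address` are hypothetical, and the sketch assumes that the citizen hub lookup returns either a current domestic address or nothing at all.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class RegistryRecord:
    """Hypothetical result of a citizen hub lookup (an assumption, not the real CPR format)."""
    domestic_address: Optional[str]  # None when the person is registered abroad


def resolve_address(
    stored: str,
    registry: RegistryRecord,
    customer_supplied: Optional[str] = None,
) -> Tuple[str, str]:
    """Return (address to use, where it came from)."""
    # Rule 1: a current domestic address in the registry always wins,
    # so a domestic move is picked up on every subscription.
    if registry.domestic_address:
        return registry.domestic_address, "registry"
    # Rule 2: no domestic address means the customer lives abroad,
    # so accept the address the customer gives instead of refusing the change.
    if customer_supplied:
        return customer_supplied, "customer"
    # Rule 3: nothing better is known; keep the stored address but mark it as stale.
    return stored, "stale"


moved_abroad = RegistryRecord(domestic_address=None)
print(resolve_address("Old Street 1, Copenhagen", moved_abroad, "New Road 2, London"))
```

With a rule like the second one, the operator could have recorded my foreign address even though the citizen hub showed no address in Denmark.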

So now I’m staying in Doggerland.