Analogies between making and serving good food and improving data and information quality are among the recurring topics on this blog. Just as good food is a subjective matter, so is good information, though those who have the task of preparing either know that fresh and clean raw materials (or data) are a must, as explained in the post Bon Appetit.

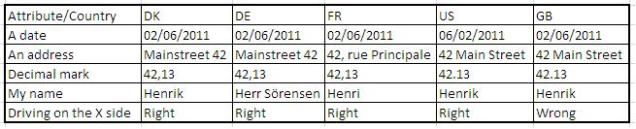

Food preferences and data and information preferences differ around the world. Highly esteemed local dishes from one country may not have the same traction in other parts of the world. As discussed in the post Data Quality and World Food, this is also true for data and information quality.

The post Metadata Meatballs examines how the same diversity applies to metadata.

Sometimes you can’t trust data even if it is captured correctly. If you ask people about their food consumption habits, for example, they tend to give answers at some distance from reality. That calls for Survey Data Laundering.

Estimating the return on investment for improving data quality has always been hard. The post Miracle Food for Thought is about how this resembles dietary advice: following “good” advice on what you should eat and drink isn’t as simple as often stated.

Anyway, we all know that better food and better service in a restaurant create more business, and sometimes we have to put the restaurant and the information bistro Under new Master Data Management.

And finally, tomorrow this blog is two years old. That calls for a Birthday Party in the cloud.