"Data governance is 80 % about people and processes and 20 % (if not less) about technology" is a common statement in the data management realm.

This blog post is about the 20 % (or less) technology part of data governance.

The term proactive data governance is often used to describe whether a given technology platform is able to support data governance well.

So, what is proactive data governance technology?

Obviously, it must be the opposite of reactive data governance technology, which must be something about discovering completeness issues, as in data profiling, and fixing uniqueness issues, as in data matching.

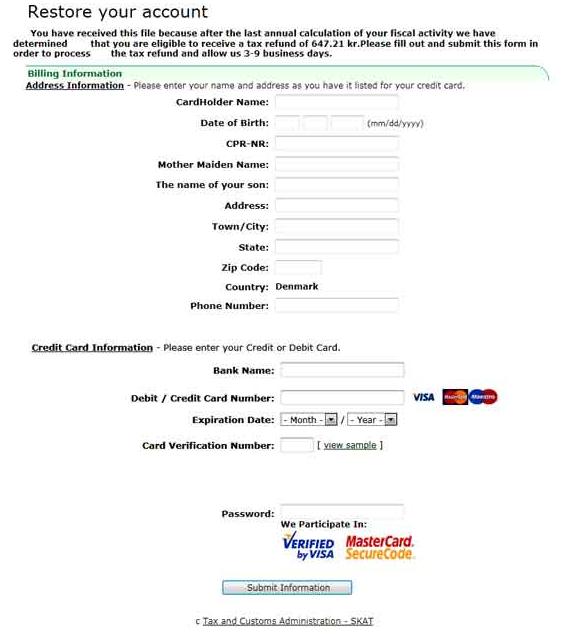

Proactive data governance technology must be implemented in data entry and other data capture functionality. The purpose of the technology is to assist people responsible for data capture in getting the data quality right from the start.

If we look at master data management (MDM) platforms, we have two possible ways of getting data into the master data hub:

- Data entry directly in the master data hub

- Data integration by data feed from other systems such as CRM, SCM and ERP solutions and from external partners

In the first case, the proactive data governance technology is a part of the MDM platform, often implemented as workflows with assistance, checks, controls and permission management. We see this most often in product information management (PIM) and in business-to-business (B2B) customer master data management. Here the insertion of a master data entity like a product, a supplier or a B2B customer involves many different employees, each with responsibility for a set of attributes.
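
To make the idea of attribute-level responsibility a bit more concrete, here is a minimal sketch in Python. It is not taken from any particular MDM platform: the role names, the attribute names and the functions `apply_update` and `missing_attributes` are all hypothetical and only illustrate how permission management and completeness checks might sit in an entry workflow.

```python
# Hypothetical mapping of roles to the attributes they are responsible for.
ATTRIBUTE_OWNERS = {
    "purchasing": {"supplier_id", "cost_price"},
    "logistics": {"weight_kg", "package_size"},
    "marketing": {"description", "category"},
}


def apply_update(entity: dict, role: str, changes: dict) -> list[str]:
    """Apply only the changes the role owns; return the rejected attribute names."""
    allowed = ATTRIBUTE_OWNERS.get(role, set())
    rejected = sorted(name for name in changes if name not in allowed)
    for name, value in changes.items():
        if name in allowed:
            entity[name] = value
    return rejected


def missing_attributes(entity: dict) -> dict[str, list[str]]:
    """For each role, list the owned attributes that are still empty."""
    result = {}
    for role, attrs in ATTRIBUTE_OWNERS.items():
        missing = sorted(a for a in attrs if entity.get(a) in (None, ""))
        if missing:
            result[role] = missing
    return result
```

In a workflow along these lines, a new product would only be released from the hub once `missing_attributes` returns an empty result, so each employee is prompted for his or her part before the record goes live.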

The second case is most often seen in customer data integration (CDI) involving business-to-consumer (B2C) records, but it certainly also applies to enriching product master data, supplier master data and B2B customer master data. Here the proactive data governance technology is implemented in the data import functionality, or even in the systems of entry, best done as Service Oriented Architecture (SOA) components that are hooked into the master data hub as well.
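
As a rough illustration of checks in the import functionality, the sketch below validates each record in a feed and keeps problem records out of the hub. Everything in it is an assumption made for the example: the `validate` rules, the use of a normalized email address as the matching key and the `import_feed` function are hypothetical, and real matching would be far more sophisticated.

```python
def validate(record: dict) -> list[str]:
    """Hypothetical shared check; a system of entry could call the same function."""
    problems = []
    if not record.get("name", "").strip():
        problems.append("name is empty")
    if "@" not in record.get("email", ""):
        problems.append("email looks invalid")
    return problems


def import_feed(feed, hub: dict) -> list[tuple[dict, list[str]]]:
    """Load clean, new records into the hub; return quarantined (record, reasons) pairs."""
    quarantined = []
    for record in feed:
        problems = validate(record)
        # Assumption: a trimmed, lower-cased email serves as a naive match key.
        key = record.get("email", "").strip().lower()
        if not problems and key in hub:
            problems.append("duplicate of an existing hub record")
        if problems:
            quarantined.append((record, problems))
        else:
            hub[key] = record
    return quarantined
```

Exposing something like `validate` as a shared service is what lets the same rule run both in the import job and in the upstream system of entry, rather than being maintained in two places.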

It is a matter of taste whether we call such technology proactive data governance support or upstream data quality. From what I have seen so far, it does work.