Earlier this week this blog featured the Magic Quadrant for Customer MDM and the Magic Quadrant for Product MDM. Today it is time to have a look at the just published Magic Quadrant for Data Quality Tools.

Last year I wondered whether we would finally see data quality tools focus on pain points other than duplicates in party data and postal address precision, as discussed in the post The Multi-Domain Data Quality Tool Magic Quadrant 2014 is out.

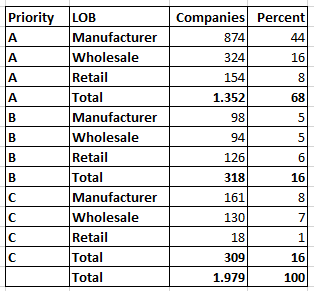

Well, apparently there still isn’t a market for that, as the Gartner report states: “Party data (that is, data about existing customers, prospective customers, citizens or patients) remains the top priority for most organizations: Almost nine in 10 (89%) of the reference customers surveyed for this Magic Quadrant consider it a priority, up from 86% in the previous year’s survey.”

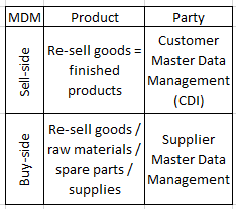

From my own experience of working predominantly with product master data over the last couple of years, there are issues and big pain points with product data. They are just different from the main pain points with party master data, as examined in the post Multi-Domain MDM and Data Quality Dimensions.

I sincerely believe that there are opportunities in providing services to solve the specific data quality challenges for product master data, which, according to Gartner, “is one of the most important information assets an organization has; second-only, perhaps, to customer master data”. In all humility, my own venture is called the Product Data Lake.

Anyway, as ever, Informatica is our friend when it comes to free copies of a data management quadrant. Get a free copy of the 2015 Magic Quadrant for Data Quality Tools here.