My current “Data Quality 2.0” endeavor started as a spontaneous heading about where, in my opinion, the data quality industry is going in the near future. Partly encouraged by being slammed, in a friendly way, for playing buzzword bingo, I have surfed Web 2.0 looking for other 2.0s. There are plenty of them, and they turn up often.

This piece by Mehmet Orun called “MDM 2.0: Comprehensive MDM” really caught my interest. Data Quality and MDM (Master Data Management) are closely related: when you do MDM, you spend much of your time on Data Quality issues, and doing Data Quality most often means doing Master Data Quality.

So, assuming “Data Quality 2.0” and “MDM 2.0” mean roughly what the links above describe, it is quite natural that the two terms share many points.

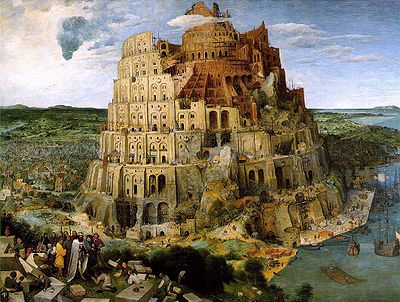

Service Oriented Architecture (SOA) is one of the binding elements, as Data Quality solutions and MDM solutions will share Reference and Master Data Management services. These services handle data stewardship, match-link, match-merge, address lookup, address standardization, address verification and data change management, carried out through Information Discrepancy Resolution Processes that embrace both internal and external data.
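To make the difference between two of these shared services concrete, here is a minimal Python sketch of match-link (records are kept but stamped with a shared master id) and match-merge (matching records collapse into one surviving record). It assumes a hypothetical `Record` type, a naive standardization step, `difflib` similarity with an arbitrary 0.85 threshold, and a "first record wins" survivorship rule. It is not how any particular Data Quality or MDM product works; in an SOA setup each function would sit behind a service that both kinds of solution call.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Record:
    # Hypothetical record layout, for illustration only.
    source_id: str
    name: str
    city: str
    master_id: Optional[str] = None


def standardize(text: str) -> str:
    """Naive standardization: lowercase and collapse whitespace."""
    return " ".join(text.lower().split())


def is_match(a: Record, b: Record, threshold: float = 0.85) -> bool:
    """Exact match on standardized city, fuzzy match on standardized name."""
    if standardize(a.city) != standardize(b.city):
        return False
    score = SequenceMatcher(None, standardize(a.name), standardize(b.name)).ratio()
    return score >= threshold


def group_matches(records: list[Record], threshold: float = 0.85) -> list[list[Record]]:
    """Greedy grouping: compare each record with the first member of each group."""
    groups: list[list[Record]] = []
    for rec in records:
        for group in groups:
            if is_match(group[0], rec, threshold):
                group.append(rec)
                break
        else:
            groups.append([rec])
    return groups


def match_link(records: list[Record], threshold: float = 0.85) -> list[Record]:
    """Match-link: keep every source record, linked by a shared master id."""
    linked: list[Record] = []
    for n, group in enumerate(group_matches(records, threshold), start=1):
        for rec in group:
            linked.append(replace(rec, master_id=f"M{n}"))
    return linked


def match_merge(records: list[Record], threshold: float = 0.85) -> list[Record]:
    """Match-merge: one surviving record per group (assumed rule: first record wins)."""
    merged: list[Record] = []
    for n, group in enumerate(group_matches(records, threshold), start=1):
        survivor = group[0]
        sources = "+".join(r.source_id for r in group)
        merged.append(replace(survivor, source_id=sources, master_id=f"M{n}"))
    return merged
```

With the records `("crm-1", "Acme Corporation", "Copenhagen")`, `("erp-7", "ACME Corporation A/S", "copenhagen")` and `("web-3", "Globex Ltd", "Aarhus")`, match-link returns all three records with master ids M1, M1 and M2, while match-merge returns two records, the first carrying the combined source id `crm-1+erp-7`.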

The mega-vendors will certainly bundle their Data Quality and MDM offerings, using SOA to a greater or lesser degree, and the ongoing vendor consolidation adds to that wave. But hopefully we will also see some true SOA, where best-of-breed “Data Quality 2.0” and “MDM 2.0” technology is implemented with strong business support under a broader solution plan. Such a plan should meet the intended business need by focusing on how information is created, used and managed for multiple purposes in a multi-cultural environment.

Actually, I should have added “(part 1)” to the heading of this post. But I will try to use 2.0-free headings in the following posts on the next-generation milestones in Data Quality and MDM coexistence. It is possible: I did that in my previous post, called Master Data Quality: The When Dimension.

You can become a Data Quality professional by coming from either the business side or the technology side of the practice. More important, in my eyes, is whether you have made serious attempts to understand the side you didn’t start from, and succeeded.

I have a page on this blog with the heading “

During many years of providing and tuning business directory matching solutions, as well as handling such match services from colleagues in the industry, I have very, very seldom seen a 100% match; even 90% matches are very rare.