Many technical magazines test a range of similar products against each other. In the IT world that could be a range of CPUs or a selection of word processors. The tests compare measurable things such as speed, the ability to actually perform a certain task and, not least, the price.

With enterprise software such as data quality tools we only have analyst reports, which evaluate the tools on far less measurable factors and often end up with a result that is much the same as stating market strength. The analysts haven’t compared the actual speed, they haven’t tested the ability to do a certain task, and they haven’t taken the price into consideration.

A core feature in most data quality tools is data matching. This is the discipline where data quality tools are able to do something considerably better than more common technology such as database managers and spreadsheets, as described in the post about deduplicating with a spreadsheet.

In the LinkedIn data matching group we have on several occasions touched on the subject of doing a once-and-for-all benchmark of all data quality tools in the world.

My guess is that this is not going to happen. So, if you want to evaluate data quality tools and data matching is the prominent issue and you don’t just want a beauty contest, then you have to do as the queen in the fairy tale about The Princess and the Pea: Make a test.

Some important differentiators in data matching effectiveness may narrow down the scope for your particular requirements, such as:

- Are you doing B2C (private names and addresses), B2B (business names and addresses) or both?

- Do you only have domestic data or do you have international data with diversity issues?

- Will you only go for one entity type (like customer or product) or are you going for multi-entity matching?

Making a proper test is not trivial.

Often you start by looking at the positive matches provided by the tool, counting the true positives against the false positives. Depending on your purpose, you will usually want to see a very low number of false positives relative to true positives.

Harder, but at least as important, is looking at the negatives (the records that were not matched), as explained in the post 3 out of 10.
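As a rough illustration of counting positives and negatives, here is a minimal Python sketch. It assumes you have a hand-verified list of true duplicate pairs for a sample of your data (called `gold_pairs` here) and the pairs a tool reported (`tool_pairs`). The function names and the record IDs are made up for the example, and precision and recall are simply the usual way of summarizing these counts, not something any particular tool reports.

```python
def normalize(pair):
    """Treat (a, b) and (b, a) as the same pair."""
    a, b = pair
    return (a, b) if a <= b else (b, a)


def evaluate_matches(tool_pairs, gold_pairs):
    """Compare pairs reported by a tool with hand-verified duplicate pairs."""
    reported = {normalize(p) for p in tool_pairs}
    gold = {normalize(p) for p in gold_pairs}

    true_pos = len(reported & gold)    # reported and really duplicates
    false_pos = len(reported - gold)   # reported but not duplicates
    false_neg = len(gold - reported)   # real duplicates the tool missed

    precision = true_pos / len(reported) if reported else 0.0
    recall = true_pos / len(gold) if gold else 0.0
    return {
        "true_positives": true_pos,
        "false_positives": false_pos,
        "false_negatives": false_neg,
        "precision": precision,
        "recall": recall,
    }


# Hypothetical record IDs, for illustration only.
gold_pairs = [(1, 2), (3, 4), (5, 6), (7, 8)]
tool_pairs = [(2, 1), (3, 4), (9, 10)]
result = evaluate_matches(tool_pairs, gold_pairs)
```

In this made-up sample the tool finds two of the four real duplicate pairs and reports one pair that is not a duplicate. The missed pairs only show up because the sample was verified by hand, which is why the negatives are the harder part of the test.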

Next, two features are essential:

- To what degree are you able to tune the match rules, preferably in a user-friendly way that doesn’t require too much IT expert involvement?

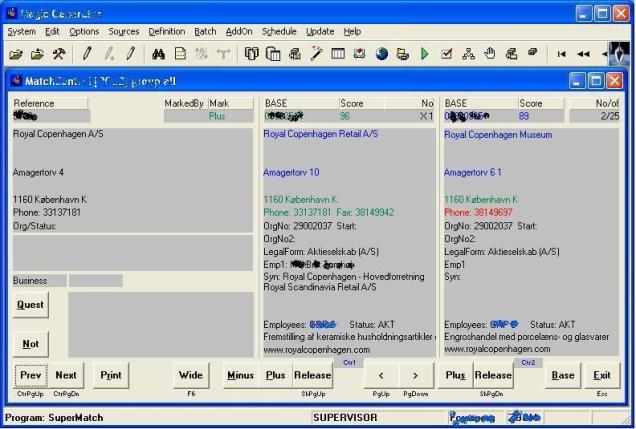

- Are you able to evaluate dubious matches in a speedy and user-friendly way, as shown in the post called When computer says maybe?
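To make the two questions above a bit more concrete, here is a small Python sketch of tunable match rules with a "maybe" band for manual review. It is only an assumption about how such rules could look: the thresholds 0.9 and 0.7 are arbitrary, and `difflib.SequenceMatcher` from the standard library is a simple stand-in for the far more capable matching logic in real data quality tools.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Crude string similarity between 0.0 and 1.0 (stand-in only)."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def classify(score, match_threshold=0.9, review_threshold=0.7):
    """Turn a score into match / maybe / no match using tunable thresholds."""
    if score >= match_threshold:
        return "match"
    if score >= review_threshold:
        return "maybe"
    return "no match"


def review_queue(candidate_pairs, match_threshold=0.9, review_threshold=0.7):
    """Collect the dubious pairs, highest score first, for a human to check."""
    queue = []
    for a, b in candidate_pairs:
        score = similarity(a, b)
        if classify(score, match_threshold, review_threshold) == "maybe":
            queue.append((score, a, b))
    return sorted(queue, reverse=True)
```

When testing a tool, the things to look for are the equivalents of the two thresholds (can a business user move them without help?) and of the review queue (how quickly can someone work through the dubious pairs?).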

A data matching effort often has two phases:

- An initial match of all currently stored data, possibly supported by matching against external reference data. Here speed may be important too. Often you have to weigh high speed against poorer results. Try it.

- Ongoing matching that assists in data entry and keeps up with data coming from outside your jurisdiction. Here it is a great plus if the data quality tool can act as a service-oriented architecture component and reuse the rules from the initial match. This has to be tested too.

And oh yes, from my experience with plenty of data quality tool evaluation processes: price is an issue too. Make sure to count both the license costs for all the features you need and the consultancy your tests showed you will need.