Today this blog has been online one year. It’s time for a birthday party.

The economy around a birthday party usually goes like this:

- You, the guest, spend some money on a nice birthday present

- I, the host, spend some money on fine food and beverage

Now, a blog is a virtual thing, and I reckon that most of my readers live far, far away from the Copenhagen South Coast. So it's going to be a remote birthday party, and, as with most other things in the social media realm, no money will actually change hands.

Anyway, here is what I would have liked to serve in the real world:

The dish I have prepared the most times when we have guests is the Spanish paella. I love paella very much and so do all our polite guests.

Also, I am a shrimp addict, so I usually like to add two or three different kinds of shrimp, from the small but extremely tasty Greenlandic shrimp to delicious giant Thai tiger prawns.

Steak

My second favorite meal is a steak. You probably won't find a better steak than one from cattle grazing on the Argentinean pampas.

As I live in the Northern Hemisphere, it's summertime now: perfect weather for preparing the steak outside on the grill.

Wine

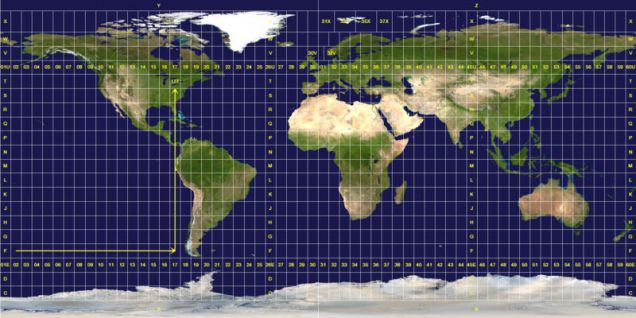

There is so much good wine coming from many places around the world. I like Californian wine, Chilean wine, South African wine, Australian wine, French wine and, last but not least, Italian wine, including the unbeatable Amarone.

Beer

As I am a native Dane, you will probably expect me to recommend a Carlsberg. Don't get me wrong: Carlsberg is probably a good beer. But there are many other good beers around. When I am in England, I like the ultimate mainstream beer: a John Smith's (now owned by the Dutch Heineken). In my opinion, the best mainstream beer is the Belgian Leffe.

Cheers

Thanks to everyone who has read this blog, subscribed or retweeted, and not least to those who have commented.