The data quality issues in doing business with private consumers (business-to-consumer = B2C) and in doing business with other businesses (business-to-business = B2B) share many challenges but also differ in many ways.

Some of my experiences (and thoughts) related to different master data domains are:

Customer master data

In B2C the number of customers, prospects and leads is usually high and characterized by relatively few interactions with each entity. In B2B you usually have a relatively small number of customers with a high number of interactions.

One of the most automated activities in data quality improvement is matching master data records with information about customers. Many of the examples we see in marketing material, research documents, blog posts and so on are about matching in the B2C realm. This is natural, since a high number of records, each typically with a low attached value, calls for automation.

Data matching in the B2B realm is indeed more complex due to numerous challenges like less standardized names of companies and typically more options in what constitutes a single customer. The high value attached to each customer also makes the risk of mistakes a showstopper for too much automation.
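To illustrate the point about less standardized company names and the risk of too much automation, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the short `LEGAL_FORMS` list, the function names and the two thresholds are hypothetical, and the similarity measure is simply the standard library's `difflib.SequenceMatcher`, not the method of any particular data quality tool.

```python
import re
from difflib import SequenceMatcher

# Hypothetical, deliberately short list of legal-form tokens to ignore.
# A real list would be much longer and country specific.
LEGAL_FORMS = {"inc", "incorporated", "ltd", "limited", "llc",
               "corp", "corporation", "co", "company", "gmbh"}


def normalize_company_name(name: str) -> str:
    """Lowercase, strip punctuation and drop legal-form tokens."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in LEGAL_FORMS)


def name_similarity(a: str, b: str) -> float:
    """Similarity between 0.0 and 1.0 of the two normalized names."""
    return SequenceMatcher(
        None, normalize_company_name(a), normalize_company_name(b)
    ).ratio()


def classify_pair(a: str, b: str,
                  auto_threshold: float = 0.95,
                  review_threshold: float = 0.75) -> str:
    """Only near-certain pairs are matched automatically.

    The thresholds are illustrative assumptions. Anything in the
    grey zone is routed to a person instead of being merged.
    """
    score = name_similarity(a, b)
    if score >= auto_threshold:
        return "auto-match"
    if score >= review_threshold:
        return "manual review"
    return "no match"


if __name__ == "__main__":
    print(classify_pair("Acme Inc.", "ACME Incorporated"))
    print(classify_pair("Acme Corp", "Zenith Ltd"))
```

The wide band between the two thresholds reflects the B2B situation described above: when each customer carries a high value, sending doubtful pairs to manual review is usually cheaper than a wrong merge.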

So in B2B we see an increasing adoption of workflows that ensure data quality during data capture, often by exploiting external reference data, which in general is also more readily available for business entities.

Location master data

The location of B2C customers means a lot. Accurate and timely delivery addresses are essential for everything from direct mail to bringing goods to the premises. Location data are used for recognizing household relations, assigning demographic stereotypes and, in many cases, calculating fees of different kinds. I had a near-disaster experience with a really bad address in my early career.

Even though location data for B2B activities is theoretically just as important, I have often seen that a little less precision is fit for purpose, or at any rate that precision is given lower priority than more pressing issues.

Product master data

Theoretically there should be no difference between B2C and B2B here, but I guess there is in practice?

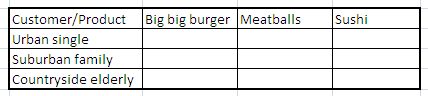

The most interesting aspect is probably the multi-domain aspect examining the relations between customers and products.

I had some experiences some years ago with the B2B realm as described in the post What is Multi-Domain MDM?: 1,000 B2B customers buying 1,000 different finished products can be a quite complicated data quality operation.

Within the B2C realm the most predominant multi-domain data quality issues I have met are related to analytics. As discussed in the post Customer/Product Matrix Management, it is about typifying your customers correctly and categorizing your products adequately at the same time.