Now, I am not going to write about the importance of location when selling real estate. Instead, I am going to give three examples of why knowing about the location matters when you are doing data matching, such as finding duplicates in names and addresses.

Location uniqueness

Let’s say we have these two records:

- Stefani Germanotta, Main Street, Anytown

- Stefani Germanotta, Main Street, Anytown

The two records are identical, character by character. But:

- If there is only one address on Main Street in Anytown, there is a very high probability that it is the same real-world individual.

- If there are only a few addresses on Main Street in Anytown, there is still a fair probability that this is the same individual.

- But if there are hundreds of addresses on Main Street in Anytown, the probability that this is the same individual will fall below the threshold for many matching purposes.
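To make the reasoning above concrete, here is a minimal sketch of how a matcher might scale its confidence in an exact name-and-street match by the number of addresses on that street. The function name, the decay formula and the decay rate are illustrative assumptions, not a standard method; in practice the address count would come from postal or address reference data.

```python
def exact_match_confidence(address_count: int, decay: float = 0.05) -> float:
    """Heuristic confidence that two records with identical name and street
    (but no house number) refer to the same individual.

    Assumption (illustrative only): confidence is 1.0 when the street has a
    single address and shrinks as the number of addresses on it grows.
    """
    if address_count < 1:
        raise ValueError("address_count must be at least 1")
    return 1.0 / (1.0 + decay * (address_count - 1))


# One address, a few addresses, hundreds of addresses on Main Street.
for count in (1, 5, 300):
    print(count, round(exact_match_confidence(count), 3))
```

With these assumed settings, one address gives full confidence, a handful of addresses still gives a fairly high score, and a street with hundreds of addresses drops far below any reasonable match threshold.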

Of course, if you are sending a direct marketing letter, it is pointless to send both letters, as:

- Either both will be delivered to the same mailbox.

- Or both will be returned by the postal service.

So this example highlights a major point in data quality. If you are matching for a single purpose, such as direct marketing, you may apply simple processing. But if you are matching for multiple purposes, such as building a master data hub, you can't avoid some complexity.
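As a rough illustration of this point, the sketch below makes the same exact-match decision differently depending on the purpose of use. The purpose names, the `Record` type and the `max_addresses` cut-off are hypothetical choices made for the example only.

```python
from typing import NamedTuple


class Record(NamedTuple):
    name: str
    street: str
    town: str


def _key(rec: Record) -> tuple:
    # Normalize case and surrounding whitespace before comparing.
    return tuple(part.strip().lower() for part in rec)


def is_duplicate(a: Record, b: Record, purpose: str,
                 addresses_on_street: int, max_addresses: int = 1) -> bool:
    """Decide whether two records are duplicates for a given (hypothetical) purpose."""
    if _key(a) != _key(b):
        return False
    if purpose == "direct_marketing":
        # Both letters would reach the same mailbox (or both be returned),
        # so one letter is enough regardless of how many addresses exist.
        return True
    if purpose == "master_data":
        # Only trust the exact match when the location is (nearly) unique.
        return addresses_on_street <= max_addresses
    raise ValueError(f"unknown purpose: {purpose!r}")


stefani = Record("Stefani Germanotta", "Main Street", "Anytown")
print(is_duplicate(stefani, stefani, "direct_marketing", addresses_on_street=300))
print(is_duplicate(stefani, stefani, "master_data", addresses_on_street=300))
```

The single-purpose rule is a plain string comparison, while the multi-purpose rule already needs extra location knowledge, which is where the complexity creeps in.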

Location enrichment

Let’s say we have these two records:

- Alejandro Germanotta, 123 Main Street, Anytown

- Alejandro Germanotta, 123 Main Street, Anytown

If you know that 123 Main Street in Anytown is a single-family house, there is a high probability that this is the same real-world individual.

But if you know that 123 Main Street in Anytown is a nursing home or a campus, or that the entrance serves many apartments or other kinds of units, then it is far less certain that these records represent the same real-world individual (at least not if the name is John Smith).

So this example highlights the importance of using external reference data in data matching.
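A minimal sketch of how such reference data might feed into a match decision is shown below. The `REFERENCE_DATA` table, its building types and unit counts, and the rule of spreading the confidence over the number of units are all hypothetical; a real implementation would query an address register or similar external source.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AddressInfo:
    building_type: str  # e.g. "single_family", "apartment_building", "nursing_home"
    units: int          # number of dwellings or other units at the address


# Hypothetical external reference data, keyed by a normalized address string.
REFERENCE_DATA = {
    "123 main street, anytown": AddressInfo("single_family", 1),
    "200 main street, anytown": AddressInfo("nursing_home", 80),
}


def same_person_confidence(address: str, base: float = 0.95) -> Optional[float]:
    """Confidence that two records with identical name and address are the
    same individual, adjusted by what the reference data says about the address."""
    info = REFERENCE_DATA.get(address.strip().lower())
    if info is None:
        return None  # no reference data: the location adds no extra evidence
    if info.building_type == "single_family":
        return base
    # Assumption: for multi-unit buildings, spread the confidence over the units.
    return base / max(info.units, 1)


print(same_person_confidence("123 Main Street, Anytown"))
print(same_person_confidence("200 Main Street, Anytown"))
```

Returning `None` when the address is unknown keeps "no reference data" distinct from "low confidence", so the caller can fall back to other matching rules.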

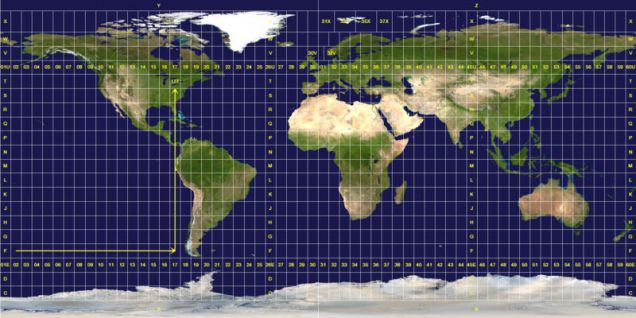

Location geocoding

Let’s say we have these two records:

- Gaga Real Estate, 1 Main Street, Anytown

- L. Gaga Real Estate, Central Square, Anytown

If you match using the street addresses, the match is not very close.

But if you assign geocodes to the two addresses, they may turn out to be very close (just around the corner), and you can then be fairly confident in the match.
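One common way to compare two geocodes is the great-circle (haversine) distance. The sketch below assumes each address has already been geocoded to latitude/longitude; the coordinates used here and the 100 m threshold are made-up values for illustration, not real geocodes for these addresses.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def locations_match(geo_a: tuple, geo_b: tuple, max_distance_m: float = 100.0) -> bool:
    """Treat two geocoded addresses as the same location if they are close enough."""
    return haversine_m(*geo_a, *geo_b) <= max_distance_m


# Hypothetical geocodes for "1 Main Street" and "Central Square" in Anytown.
main_street_1 = (55.6761, 12.5683)
central_square = (55.6765, 12.5690)
print(round(haversine_m(*main_street_1, *central_square)))
print(locations_match(main_street_1, central_square))
```

In a real matcher the distance would typically be one piece of evidence combined with the name similarity ("Gaga Real Estate" versus "L. Gaga Real Estate"), rather than a match decision on its own.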

Assigning geocodes usually serves purposes other than data matching. So this example highlights how enhancing your data can have several positive impacts.