The possible connection between “big data”, the hot buzz within IT today, and the good old topic of master data management has been discussed a lot lately. An example from CIO UK today is the article called Big data without master data management is a problem.

As said in the article, there is a connection through big master data (and big reference data) to big transaction data. Big transaction data is what we would usually call big data, because these are the really big ones.

The two most mentioned kinds of big transaction data are:

- Social data and

- Sensor data

I have also seen a lot of connections between these kinds of big data and master data in multiple domains.

Social Data

Connecting social data to Master Data Management (MDM) is an ongoing discussion I have been involved in for the last three years, lately through the new LinkedIn group called Social MDM.

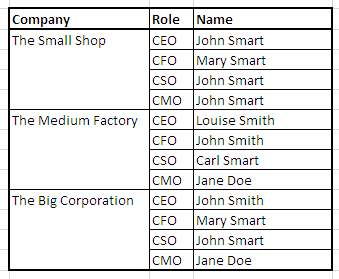

The customer master data domain is in focus here, as the immediate connection is how to relate traditional systems of record holding customer master data to the systems of engagement, where the big social data are waiting to be analyzed and eventually become a part of day-to-day customer-centric business processes.

However, being able to analyze, monitor and take action on what is being said about specific products in social data is another option, and eventually that has to be linked to product master data. In product master data management the focus has traditionally been on your own (resell) products. Effectively listening to social data will mean that you also have to manage data about competing products.

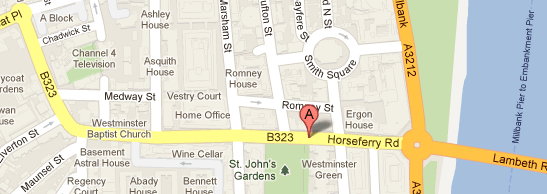

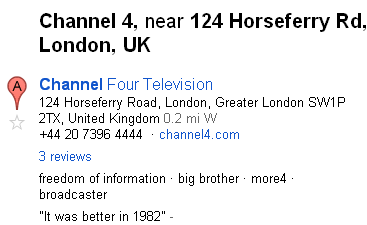

Attaching location to social data has been around for a long time. Connecting social data to your master data will also require that your location master data are well aligned with the real world.

Sensor Data

For many years I have been involved in data management within public transportation, where we have big data coming in from sensors of different kinds.

The big problem has for sure been being able to connect these transactions correctly to master data. The challenges here are described in the post Multi-Entity Master Data Quality.

The biggest problem is that all the different equipment generating the sensor data in practice can’t be at the same stage at the same time. This will eventually create data that, if related without care, will show very wrong information about who the passenger(s) were, what kind of trip it was, where the journey happened and under which timetable.
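
One way to picture the care needed is the minimal sketch below. It assumes that each master data record (here a timetable version) carries a validity period and that a sensor event is related by its own timestamp, not by whatever version the device happened to have loaded. All names (`TimetableVersion`, `resolve_timetable`, `device_loaded_version`) and the sample dates are hypothetical illustrations, not taken from any real system.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class TimetableVersion:
    """Hypothetical master data record with a validity period."""
    version_id: str
    valid_from: datetime  # inclusive
    valid_to: datetime    # exclusive


def resolve_timetable(event_time, versions):
    """Return the version whose validity period covers event_time, or None."""
    for version in versions:
        if version.valid_from <= event_time < version.valid_to:
            return version
    return None


# Made-up master data: two consecutive timetable versions.
versions = [
    TimetableVersion("winter", datetime(2012, 1, 1), datetime(2012, 4, 1)),
    TimetableVersion("summer", datetime(2012, 4, 1), datetime(2012, 10, 1)),
]

# Made-up sensor event: the device still has the winter timetable loaded,
# but the event happened after the summer timetable took effect.
event_time = datetime(2012, 4, 2, 8, 15)
device_loaded_version = "winter"

resolved = resolve_timetable(event_time, versions)

# Flag the event when the device's view disagrees with the master data.
needs_review = resolved is None or resolved.version_id != device_loaded_version
```

In this sketch the event is related to the summer timetable and flagged for review because the device was one stage behind. A real solution would likely need the same treatment for other entities such as vehicles, stops and fare products.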