The question above might seem a bit belated now that I have been blogging about the subject for nine months. But from time to time I ask myself questions like:

Is Data Quality an independent discipline? If it is, will it continue to be one?

Data Quality actually is (or should be) a part of a lot of other disciplines.

Data Governance as a discipline is probably the best place to include general data quality skills and methodology, that is, all the people and process sides of data quality practice. Data Governance is an emerging discipline with an evolving definition, says Wikipedia. I think there is a pretty good chance that data quality management as a discipline will increasingly be regarded as a core component of data governance.

Master Data Management is a lot about Data Quality, but MDM could be dead already. Just like SOA. In short: I think MDM and SOA will survive, getting new life from the semantic web and all the data resources in the cloud. For that, MDM and SOA need Data Quality components. Data Quality 3.0 it is.

You may then replace MDM with CRM, SCM, ERP and so on, and thereby extend the use of Data Quality components from dealing only with master data to also covering transaction data.

Next questions: Are Data Quality tools an independent technology? If they are, will they continue to be that?

It’s clear that Data Quality technology is moving from stand-alone batch processing environments, via embedded modules, to, oh yes, SOA components.

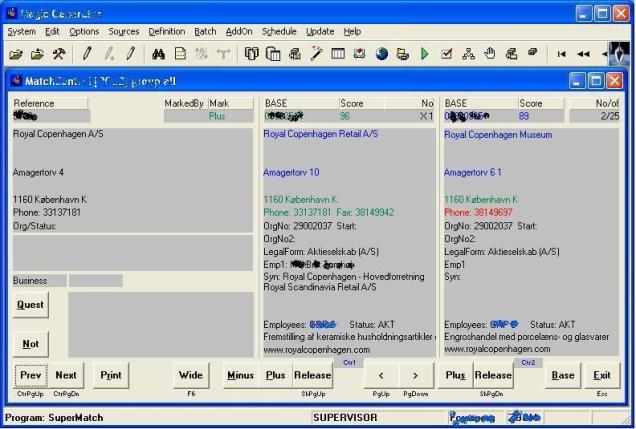

If we look at what data quality tools actually do today, they mostly support you by automating data profiling and data matching, which probably covers only some of the data quality challenges you have.
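For readers who have not seen these two capabilities up close, here is a minimal sketch of what profiling and matching boil down to. It is an illustration only, not how any particular tool works: the sample records, the `profile` and `match` helpers and the 0.85 similarity threshold are all assumptions made up for this example, and the matching uses Python's standard `difflib` where real tools would use far more refined techniques.

```python
from difflib import SequenceMatcher

# Hypothetical customer records, invented for illustration only.
records = [
    {"name": "John Smith", "city": "London"},
    {"name": "Jon Smith", "city": "London"},
    {"name": "Mary Jones", "city": ""},
    {"name": "Peter Brown", "city": "Leeds"},
]


def profile(rows, column):
    """Profiling: count total, missing and distinct values in one column."""
    values = [row.get(column, "").strip() for row in rows]
    filled = [v for v in values if v]
    return {
        "rows": len(values),
        "missing": len(values) - len(filled),
        "distinct": len(set(filled)),
    }


def match(rows, column, threshold=0.85):
    """Matching: return index pairs whose values in `column` look similar.

    The threshold is an arbitrary assumption; compares every pair, so it
    only suits small samples.
    """
    pairs = []
    for i in range(len(rows)):
        for j in range(i + 1, len(rows)):
            a = rows[i][column].lower()
            b = rows[j][column].lower()
            if SequenceMatcher(None, a, b).ratio() >= threshold:
                pairs.append((i, j))
    return pairs


print(profile(records, "city"))
print(match(records, "name"))
```

With this sample, the profile of the `city` column reports one missing value, and the matcher flags the two "Smith" records as a possible duplicate pair. Everything beyond that (deciding which record survives, fixing the missing city, preventing the duplicate at entry) is exactly the part the tools leave to you.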

In recent years there has been a lot of consolidation in the market around Data Integration, Master Data Management and Data Quality, which certainly tells us that the market needs Data Quality technology as components in a bigger scheme along with other capabilities.

But some new pure Data Quality players have also been established, and I think I often see some old folks from the acquired entities at these new challengers. So independent Data Quality technology is not dead, and it doesn’t seem to want to be.