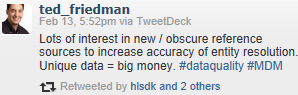

In a recent tweet Ted Friedman of Gartner (the analyst firm) said:

I think he is right.

Duplicates have always been the number one pain point in most places when it comes to the cost of poor data quality.

Though I have been in the data matching business for many years and have fought duplicates with deduplication tools in numerous battles, the war doesn’t seem to be won by deduplication tools alone, as told in the post Somehow Deduplication Won’t Stick.

Eventually, deduplication always comes down to entity resolution: you have to decide which results are true positives and which are useless false positives, and you are left wondering how many false negatives you didn’t catch, that is, how much return on your deduplication investment you missed.
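To make the three outcomes concrete, here is a minimal sketch of how a deduplication run could be scored against a manually verified set of duplicate pairs. The function name `evaluate_matches`, the record IDs and the sample pairs are all made up for illustration, and it assumes you actually have such a verified set, which in practice is the hard part.

```python
def evaluate_matches(predicted_pairs, true_pairs):
    """Score proposed duplicate pairs against verified duplicate pairs."""
    # Treat (a, b) and (b, a) as the same pair.
    predicted = {frozenset(pair) for pair in predicted_pairs}
    actual = {frozenset(pair) for pair in true_pairs}

    true_positives = len(predicted & actual)   # proposed and really duplicates
    false_positives = len(predicted - actual)  # proposed but not duplicates
    false_negatives = len(actual - predicted)  # real duplicates that were missed

    # Precision: share of proposed pairs that are real duplicates.
    precision = true_positives / len(predicted) if predicted else 0.0
    # Recall: share of real duplicates that the tool caught.
    recall = true_positives / len(actual) if actual else 0.0

    return {
        "true_positives": true_positives,
        "false_positives": false_positives,
        "false_negatives": false_negatives,
        "precision": precision,
        "recall": recall,
    }


# Hypothetical record IDs: pairs proposed by a tool vs. pairs verified by a human.
predicted = [(1, 2), (3, 4), (5, 6)]
verified = [(2, 1), (3, 4), (7, 8)]
result = evaluate_matches(predicted, verified)
```

In this made-up example the tool finds two real duplicates, proposes one false positive and misses one pair. The false negatives are only countable here because the verified set is given; in a real project that number is exactly the one you are left wondering about.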

Bringing in new, even obscure, reference sources is in my eyes a very good idea, as examined in the post The Good, Better and Best Way of Avoiding Duplicates.