Data Stewardship is performed by data stewards.

What is a Data Steward?

A steward may in a general sense be:

- One employed in a large household or estate to manage domestic concerns – typically an old role.

- An employee on a ship, airplane, bus, or train who attends to passengers’ needs – typically a new role.

My guess is that data stewardship will also tend to move from the first kind of role to the latter.

The current data steward role is predominantly seen as overseeing the housekeeping of the enterprise’s internal data assets. It’s about keeping everything there clean and tidy. It involves having routines and rules that ensure that things with data are done properly according to the traditions and culture in the enterprise.

Big Data Stewardship

In the future, enterprises will rely much more on external data. Exploiting third-party reference data and open government data, and digging into big data sources such as social data and sensor data, will shift the focus away from mostly keeping the internal data fit for purpose.

As such, you as a data steward will become more like the steward on a ship, airplane, bus or train. Data will come and go. After a nice welcoming smile you will have to carefully explain the safety procedures. Some data will be fairly easy to handle – mostly just spending the time sleeping. Other data will be demanding, asking for this and that and changing its mind shortly after. Some data will be a frequent traveler and some data will be there for the first time.

So, are you ready to attend the next batch of travelling data on board your enterprise?

Great piece, Henrik. I can see an explosion in the scope of responsibilities for data quality stewards looming ahead, and you highlight this with your flight crew analogy. However, I see that the logical progression is for software solutions to step in to take the strain too, and help with the management of these new and somewhat disparate challenges. With Big Data, the same techniques used for Master Data Management probably won’t scale effectively. A successful steward role needs to become more about administration of the processes, rather than the data.

Speaking from my experience on the software side, with the increased usage of external data, it’s imperative that any process that is in place is agile, using techniques such as in-memory processing, machine learning and semantic annotation to aid with a fast turnaround and easy data identification.

I’m a proponent of what I like to call ‘Live Data Quality’, a process whereby data quality is ‘always on’ whenever there is interaction with the master data sources. This is distinct from ‘real time data quality’: with a real time process, it’s the delivery mechanism that is immediate, but not the evolution of rules, relationships and results. Live Data Quality reacts to stimuli such as updates from reference sources, relationship discovery or the inclusion of new data, offering continual refinement of the rules, the results and the reporting methods.
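As a rough illustration of that distinction, here is a minimal Python sketch. It assumes a single, very simple rule (a code must appear in a reference list) and shows the results being refreshed both when new data arrives and when the reference source itself changes. All names here (`LiveQualityMonitor`, `on_new_record`, `on_reference_update`) are hypothetical and not taken from any product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class LiveQualityMonitor:
    """Hypothetical 'always on' check against a reference list that can change."""

    valid_codes: set[str]
    records: dict[str, str] = field(default_factory=dict)  # record id -> code

    def failing(self) -> list[str]:
        """Return the ids of records whose code is not in the current reference set."""
        return sorted(
            rid for rid, code in self.records.items()
            if code not in self.valid_codes
        )

    def on_new_record(self, record_id: str, code: str) -> list[str]:
        """Stimulus: new data arrives, so the results are refreshed."""
        self.records[record_id] = code
        return self.failing()

    def on_reference_update(self, new_codes: set[str]) -> list[str]:
        """Stimulus: the reference source changes, so the rule itself evolves."""
        self.valid_codes = set(new_codes)
        return self.failing()


monitor = LiveQualityMonitor(valid_codes={"DK", "GB"})
monitor.on_new_record("r1", "DK")
first = monitor.on_new_record("r2", "SE")  # "SE" is not yet in the reference list
second = monitor.on_reference_update({"DK", "GB", "SE"})  # the reference catches up
print(first, second)
```

A purely real time check would have flagged `r2` once on arrival and left it at that; in this sketch the same record is re-evaluated when the reference list changes, which is the ‘live’ part.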

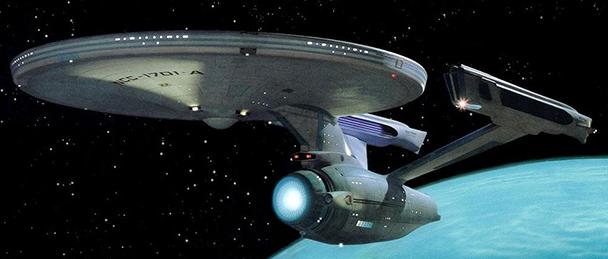

This makes the steward responsible for monitoring the overall process of data quality rather than managing each individual data set. This will, in turn, allow for better reporting, for actionable results to be realized more quickly and for performance to scale to cope with the challenge of varied and sizable external reference data. After all, the decisions of data utilization are usually made at a more senior level. What we are dealing with here is Scotty in the Enterprise’s engine room, where increasing demands are inevitably met with ‘I’m givin’ her all she’s got Captain!’

Finally, taking your Star Trek analogy one step further, if we use the traditional models to deal with external Big Data, then we face the ‘no win’ scenario. I’m more a fan of Captain Kirk’s solution to the Kobayashi Maru, which is to redefine the problem to ensure success, and I believe ‘Live Data Quality’ is at least a step in the right direction.

Thanks a lot for the good comment, Simon. Yep, the data steward will need some cutting-edge tools out there.