Most Master Data Management implementations revolve around the business partner (customer/supplier) domain and/or the product domain. But there is a growing appetite for also including the finance domain in MDM implementations. Note that the finance domain here means the finance-related master data that every organization has, not the specific master data challenges that organizations in the financial services sector have. The latter topic was covered on this blog in a post called “Master Data Management in Financial Services”.

In this post I will examine some high-level considerations for implementing an MDM platform that (also) covers finance master data.

Finance master data can roughly be divided into these three main object types:

- Chart of accounts

- Profit and cost centers

- Accounts receivable and accounts payable

Chart of Accounts

The master data challenge for the chart of accounts is mainly about handling multiple charts of accounts, as seen in enterprises that operate in multiple countries, have multiple lines of business and/or have grown through mergers and acquisitions.

For that reason, solutions like Hyperion have been used for decades to consolidate financial performance data across multiple charts of accounts, possibly held in several different applications.

Here, MDM platforms can improve data management first and foremost in the processes that take place when new accounts are added or existing accounts are changed. This is where the MDM platform capabilities for workflow management, permission rights and approval can be utilized.
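To make the workflow, permission and approval idea a bit more concrete, here is a minimal Python sketch of a change request for a chart of accounts entry. It is not how any particular MDM platform implements this; the class `AccountChangeRequest`, the roles `accountant` and `controller`, the `PERMISSIONS` table and the four-eyes rule are all hypothetical assumptions for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    SUBMITTED = "submitted"
    APPROVED = "approved"
    REJECTED = "rejected"


# Hypothetical permission rights: which roles may perform which workflow action.
PERMISSIONS = {
    "submit": {"accountant", "controller"},
    "decide": {"controller"},
}


@dataclass
class AccountChangeRequest:
    chart_id: str        # which chart of accounts, e.g. one per country or acquired company
    account_number: str
    description: str
    requested_by: str
    status: Status = Status.DRAFT
    history: list = field(default_factory=list)

    def _require(self, action: str, role: str) -> None:
        # Enforce permission rights before any state change.
        if role not in PERMISSIONS[action]:
            raise PermissionError(f"role {role!r} may not {action}")

    def submit(self, user: str, role: str) -> None:
        self._require("submit", role)
        if self.status is not Status.DRAFT:
            raise ValueError("only draft requests can be submitted")
        self.status = Status.SUBMITTED
        self.history.append(("submitted", user))

    def decide(self, user: str, role: str, approve: bool) -> None:
        self._require("decide", role)
        if self.status is not Status.SUBMITTED:
            raise ValueError("only submitted requests can be approved or rejected")
        if user == self.requested_by:
            # Hypothetical four-eyes rule: nobody decides on their own request.
            raise PermissionError("requester cannot decide on own request")
        self.status = Status.APPROVED if approve else Status.REJECTED
        self.history.append((self.status.value, user))


# Usage: a new revenue account in a hypothetical Danish chart of accounts.
req = AccountChangeRequest("DK01", "4010", "Consulting revenue", requested_by="anna")
req.submit(user="anna", role="accountant")
req.decide(user="bo", role="controller", approve=True)
print(req.status)   # Status.APPROVED
print(req.history)  # [('submitted', 'anna'), ('approved', 'bo')]
```

The point of the sketch is that the account is only changed through a governed request with an audit trail, rather than edited directly in each application holding a chart of accounts.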

Profit and Cost Centers

The master data challenge for profit and cost centers relates to an extended business partner concept in master data management, where external parties such as customers and suppliers/vendors are handled together with internal parties such as profit and cost centers, which are internal business units.
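A minimal Python sketch of such an extended business partner model could look like the one below, where one `Party` type covers both external parties and internal business units and the difference is carried by roles. The class, the role names and the rule that internal parties may also hold customer or supplier roles (as in intercompany trade) are hypothetical design assumptions, not a description of any specific MDM platform.

```python
from dataclasses import dataclass, field

# Hypothetical role vocabulary for an extended business partner model.
EXTERNAL_ROLES = {"customer", "supplier"}
INTERNAL_ROLES = {"profit_center", "cost_center"}


@dataclass
class Party:
    party_id: str
    name: str
    internal: bool                      # True for internal business units
    roles: set = field(default_factory=set)

    def add_role(self, role: str) -> None:
        if role not in EXTERNAL_ROLES | INTERNAL_ROLES:
            raise ValueError(f"unknown role {role!r}")
        # Profit and cost center roles only make sense for internal parties.
        # Customer/supplier roles are allowed on internal parties too (intercompany trade).
        if role in INTERNAL_ROLES and not self.internal:
            raise ValueError(f"{role!r} requires an internal party")
        self.roles.add(role)


def parties_with_role(parties, role):
    """Return the ids of all parties, internal or external, that hold a given role."""
    return [p.party_id for p in parties if role in p.roles]


parties = [
    Party("P1", "Acme Ltd", internal=False),
    Party("U1", "Nordic Sales", internal=True),
    Party("U2", "IT Operations", internal=True),
]
parties[0].add_role("customer")
parties[0].add_role("supplier")
parties[1].add_role("profit_center")
parties[2].add_role("cost_center")
print(parties_with_role(parties, "profit_center"))  # ['U1']
```

Keeping one party type with roles, instead of separate customer, supplier and cost center tables, is what allows the financial, sales and procurement perspectives to be viewed together.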

In my experience, silo thinking still rules here. We still have a long way to go in data literacy before we can consolidate the financial perspective with the HR, sales and procurement perspectives within the organization.

Accounts Receivable and Accounts Payable

Accounts receivable data overlaps with, and usually shares master data with, customer master data that is mastered from a sales and service perspective. Likewise, accounts payable data overlaps with, and usually shares master data with, supplier/vendor master data that is mastered from a procurement perspective.

But there are differences in the selection of parties covered, as touched on in the post “Direct Customers and Indirect Customers”. There are also differences in the time span during which the overlapping parties are handled. Finally, the ownership of overlapping attributes is always a hard nut to crack.

A classic source of mess in this area is when you have to pay money to a customer or receive money from a supplier. This most often leads to the creation of duplicate business partner records.
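One way to handle this is to keep a single business partner record that holds both a customer role and a supplier role. The Python sketch below consolidates existing duplicates under that idea. The record fields (`id`, `role`, `name`, `country`, `vat`), the list of legal form suffixes and the deliberately naive match rule (exact VAT number, otherwise normalized name plus country) are hypothetical assumptions; real MDM platforms apply far richer matching and survivorship logic.

```python
import re
from collections import defaultdict

# Hypothetical legal form suffixes stripped before name comparison.
LEGAL_FORMS = r"\b(ltd|inc|gmbh|as|aps)\b"


def match_key(rec):
    """Build a match key: VAT number if present, else normalized name + country."""
    vat = rec.get("vat")
    if vat:
        return ("vat", re.sub(r"[^A-Z0-9]", "", vat.upper()))
    name = re.sub(r"[^a-z0-9 ]", "", rec["name"].lower())
    name = re.sub(LEGAL_FORMS, "", name)
    return ("name", " ".join(name.split()), rec["country"])


def consolidate(records):
    """Group customer and supplier records into one business partner per match key."""
    groups = defaultdict(list)
    for rec in records:
        groups[match_key(rec)].append(rec)
    return [
        {
            "source_ids": sorted(r["id"] for r in recs),
            "roles": sorted({r["role"] for r in recs}),
        }
        for recs in groups.values()
    ]


records = [
    {"id": "C-100", "role": "customer", "name": "Acme A/S", "country": "DK", "vat": "DK 12345678"},
    {"id": "V-200", "role": "supplier", "name": "ACME A/S", "country": "DK", "vat": "dk12345678"},
    {"id": "C-101", "role": "customer", "name": "Beta Ltd.", "country": "GB"},
]
for partner in consolidate(records):
    print(partner)
# {'source_ids': ['C-100', 'V-200'], 'roles': ['customer', 'supplier']}
# {'source_ids': ['C-101'], 'roles': ['customer']}
```

Note that a record with a VAT number and one without it will never match under this rule; that gap is where fuzzy matching and data stewardship come in.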

MDM Platforms and Finance Master Data

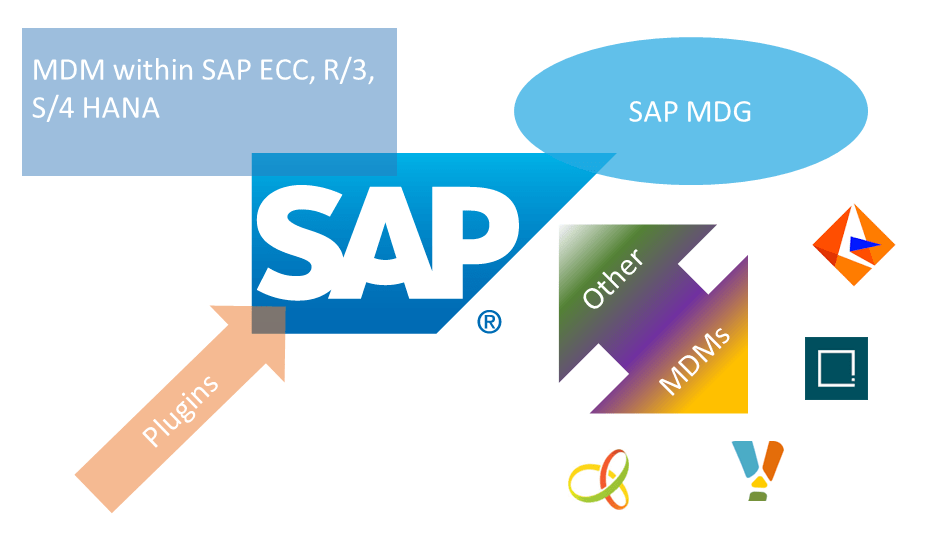

Many large enterprises use SAP as their ERP platform and as the place where finance master data is handled. Therefore, the SAP MDG-F offering for MDM is a likely candidate for an MDM platform here, with the considerations explored in the post “SAP and Master Data Management”.

However, if MDM capabilities in general are better served by a non-SAP MDM platform, as examined in the above-mentioned post, or if the ERP landscape is not (only) SAP, then other MDM platforms can be used for finance master data as well.

Informatica and Stibo STEP are two examples that I have worked with. My experience so far, though, is that compared to the business partner domain and the product domain, these platforms currently do not have much to offer for the finance domain in terms of capabilities and methodology.